『How to share files on Linux (for the ELK setup, skip straight to Part 2)』

- Add users a, b and c

adduser a

adduser b

adduser c

- Create the group developteam

groupadd developteam

- Add users a, b and c to the group

usermod -a -G developteam a

usermod -a -G developteam b

usermod -a -G developteam c

- Check the current umask and set it to 022

umask

umask 022

- As root, create the shared directory

mkdir -p /tmp/project

- Set the directory's group to developteam

chown -R :developteam /tmp/project/

- Grant the group write permission

chmod -R g+w /tmp/project/

- Set the SGID bit

chmod -R g+s /tmp/project/

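With SGID set on the directory, files created inside it inherit the directory's group (developteam) instead of the creator's primary group.

Other members of the group can now work on the files.

To stop users from deleting each other's files, set the sticky bit on the directory. [Use with caution; do not use this on directories managed by git and similar tools.]

chmod -R o+t /tmp/project/

With both SGID and the sticky bit set, every group member can write to the directory, but a user cannot delete files created by another user.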
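The effect of the two bits can be checked without root on a throwaway directory. The following is a minimal sketch, assuming GNU coreutils (`stat -c`) and using the caller's own group in place of developteam; the temp directory and the file name `example.txt` are only for illustration. Under a umask of 022 the directory mode should come out as 3775 (2000 for SGID, 1000 for sticky, 775 for the permissions).

```shell
#!/bin/sh
# Sketch: observe SGID and sticky-bit behaviour on a throwaway directory.
# Uses a temp dir and the caller's own group, so no root is needed.
umask 022
base=$(mktemp -d)
project="$base/project"
mkdir -p "$project"

chmod g+w "$project"   # group members may create files
chmod g+s "$project"   # new files inherit the directory's group
chmod +t "$project"    # only a file's owner (or the directory owner/root) may delete it

mode=$(stat -c '%a' "$project")        # octal mode (GNU stat)
dir_group=$(stat -c '%G' "$project")

touch "$project/example.txt"
file_group=$(stat -c '%G' "$project/example.txt")

echo "mode=$mode dir_group=$dir_group file_group=$file_group"
rm -rf "$base"
```

The file's group matching the directory's group is the part that makes the shared directory work: without SGID, each file would carry its creator's primary group.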

『Part 2: setting up ELK』

Environment:

- Ubuntu 22

- Docker

Create a Docker network

docker network create elastic

Pull the three images

docker pull elasticsearch:7.8.0

docker pull logstash:7.8.0

docker pull kibana:7.8.0

Start Elasticsearch

docker run -d --name es01 --net elastic -p 9200:9200 -e "discovery.type=single-node" 121454ddad72

(121454ddad72 is the image ID on my machine; use your own image ID.)

Elasticsearch config

Enter the container and edit elasticsearch.yml in the config directory:

cluster.name: "docker-cluster"

network.host: 0.0.0.0

xpack.security.enabled: true

xpack.security.transport.ssl.enabled: true

After exiting the container, restart it.

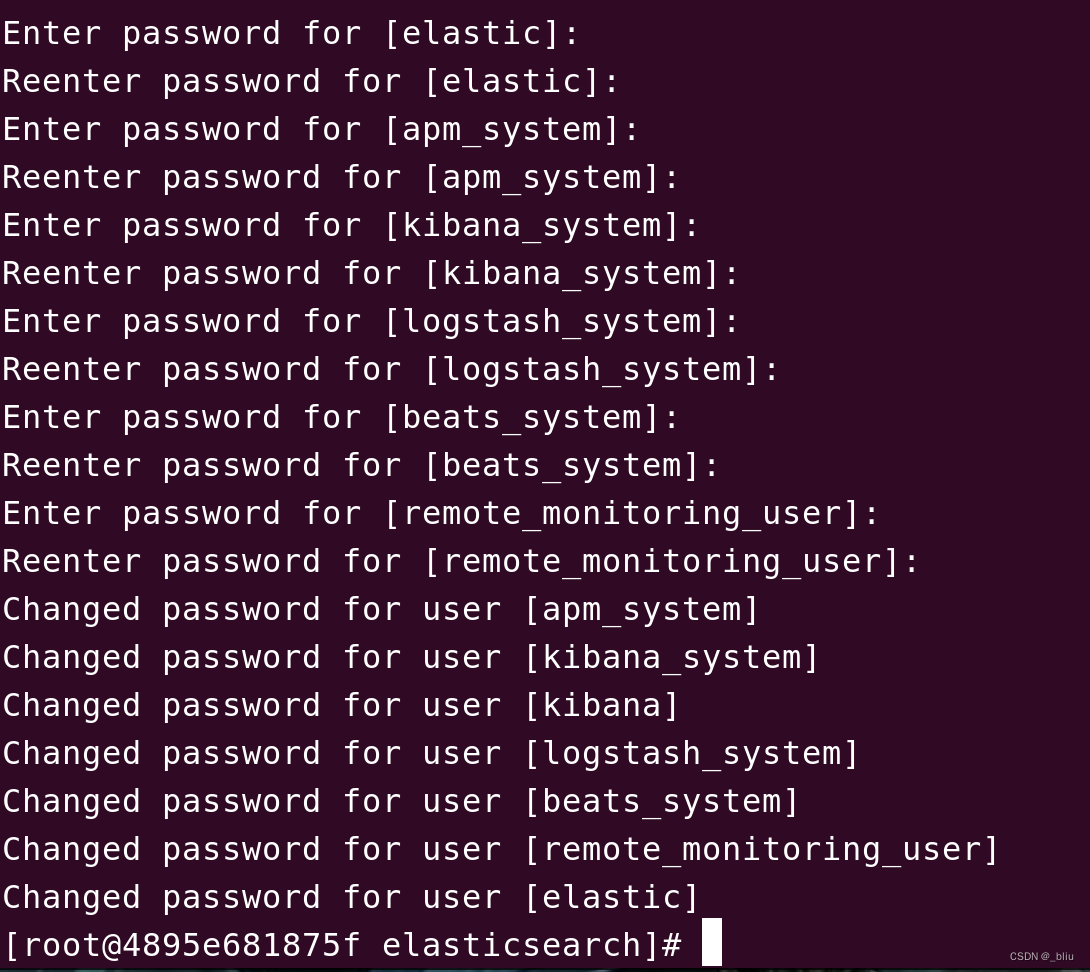

Enter the container again and set the passwords for the built-in users.

/usr/share/elasticsearch/bin/elasticsearch-setup-passwords interactive

Check that port 9200 is listening

ss -ltn | grep 9200

Open the address

http://localhost:9200/

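A problem you may run into:

Elasticsearch in the container fails with a "max virtual memory areas" error.

See the following:

>> Fixing "max virtual memory areas" in an ElasticSearch container

Just add the following line to /etc/sysctl.conf:

vm.max_map_count=262144

To confirm the setting took effect, run sysctl -p, which reloads the file and prints the values it applied.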
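Before editing /etc/sysctl.conf it can help to see what the host currently has. This is a small sketch that only reads the value and prints a suggestion; the variable names are illustrative, and it assumes a Linux host where /proc/sys/vm/max_map_count is readable.

```shell
#!/bin/sh
# Sketch: compare the host's vm.max_map_count with the value Elasticsearch needs.
required=262144
# Fall back to 0 if the file cannot be read (for example on a non-Linux host).
current=$(cat /proc/sys/vm/max_map_count 2>/dev/null || echo 0)

if [ "$current" -lt "$required" ]; then
  echo "vm.max_map_count=$current is below $required"
  echo "apply until next reboot:  sudo sysctl -w vm.max_map_count=$required"
  echo "persist: add vm.max_map_count=$required to /etc/sysctl.conf, then sudo sysctl -p"
else
  echo "vm.max_map_count=$current is sufficient"
fi
```

`sysctl -w` changes the running kernel only, so the /etc/sysctl.conf entry is still needed for the setting to survive a reboot.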

If http://localhost:9200 returns something like the following, it worked:

{
  "name" : "4895e681875f",
  "cluster_name" : "docker-cluster",
  "cluster_uuid" : "C8NC8FWcSkqUv9x5M5pGVQ",
  "version" : {
    "number" : "7.8.0",
    "build_flavor" : "default",
    "build_type" : "docker",
    "build_hash" : "757314695644ea9a1dc2fecd26d1a43856725e65",
    "build_date" : "2020-06-14T19:35:50.234439Z",
    "build_snapshot" : false,
    "lucene_version" : "8.5.1",
    "minimum_wire_compatibility_version" : "6.8.0",
    "minimum_index_compatibility_version" : "6.0.0-beta1"
  },
  "tagline" : "You Know, for Search"
}

Wow, that is a lot of passwords to set

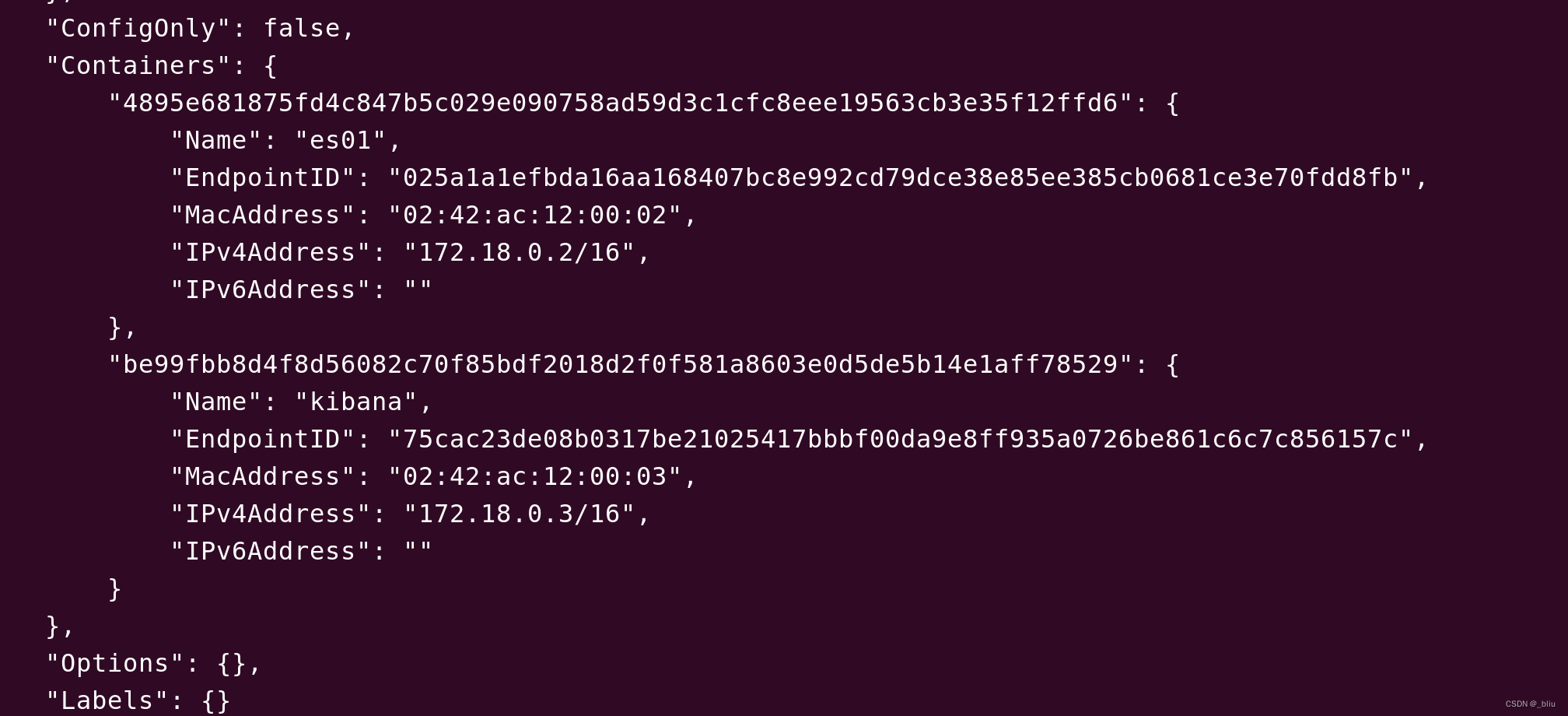

Check the network
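One way to check the network is to ask Docker which address the Elasticsearch container received, since the Kibana and Logstash configs later in this post need it. The sketch below assumes the container name es01 from the docker run command above; the helper name `container_ip` is made up for illustration. It is guarded so that it does nothing harmful on a machine without Docker.

```shell
#!/bin/sh
# Sketch: print the IP address(es) a container has on its Docker networks.
container_ip() {
  # $1 = container name; the template walks every attached network
  docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

if command -v docker >/dev/null 2>&1; then
  container_ip es01 || echo "could not inspect container es01"
else
  echo "docker is not installed on this machine"
fi
```

`docker network inspect elastic` gives a fuller view, listing every container attached to the network together with its address.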

2. Install Kibana

docker run -d --name kibana --net elastic -p 5601:5601 -v /data/kibana/kibana.yml:/usr/share/kibana/config/kibana.yml kibana:7.8.0

If you hit:

FATAL Error: [config validation of [xpack.reporting].encryptionKey]: value has length [11] but it must have a minimum length of [32].

enter the container and edit the configuration:

- Set elasticsearch.hosts to match your own environment

- Use a longer encryption key, at least 32 characters (pick whatever you like)

- Set elasticsearch.password to the password you just set

#

# ** THIS IS AN AUTO-GENERATED FILE **

## Default Kibana configuration for docker target

server.name: kibana

server.host: "0"

elasticsearch.hosts: [ "http://172.18.0.2:9200" ]

monitoring.ui.container.elasticsearch.enabled: true

i18n.locale: "zh-CN"

elasticsearch.username: "elastic"

elasticsearch.password: "123456"

xpack.reporting.encryptionKey: "sdfbwe83q12gsdfgsdvfshjfvashfn34y372rh32gru32yre32erh2u3hrlibsdfjefe"

xpack.security.encryptionKey: "hsaufhsabfasbhdsbfjbsjhfbshjbfsbfcshjdbf623423gjh234v32hv4h32h3vrhj"

After editing, exit and restart the container.
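Because Kibana and Elasticsearch both sit on the user-defined network elastic, Docker's built-in DNS lets one container reach the other by name, so the config does not have to hard-code an IP that may change after a restart. The sketch below writes such a kibana.yml on the host; `KIBANA_DIR`, `ES_PASSWORD` and `ENC_KEY` are illustrative variables with placeholder defaults, not values from this post, and reusing one key for both settings is only a shortcut for the example.

```shell
#!/bin/sh
# Sketch: generate a kibana.yml that addresses Elasticsearch by container name.
# Point KIBANA_DIR at /data/kibana to match the volume mount used above.
KIBANA_DIR=${KIBANA_DIR:-$(mktemp -d)}
ES_PASSWORD=${ES_PASSWORD:-changeme}
# Kibana rejects encryption keys shorter than 32 characters.
ENC_KEY=${ENC_KEY:-0123456789abcdef0123456789abcdef}

if [ "${#ENC_KEY}" -lt 32 ]; then
  echo "encryption key must be at least 32 characters" >&2
  exit 1
fi

cat > "$KIBANA_DIR/kibana.yml" <<EOF
server.name: kibana
server.host: "0"
elasticsearch.hosts: [ "http://es01:9200" ]
elasticsearch.username: "elastic"
elasticsearch.password: "$ES_PASSWORD"
xpack.reporting.encryptionKey: "$ENC_KEY"
xpack.security.encryptionKey: "$ENC_KEY"
EOF

echo "wrote $KIBANA_DIR/kibana.yml"
```

Checking the key length up front turns the FATAL startup error into an immediate message on the host.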

- Install Logstash

Create the Logstash configuration files.

logstash.yml:

http.host: "0.0.0.0"
xpack.monitoring.elasticsearch.hosts: [ "http://172.19.0.2:9200" ]
xpack.monitoring.enabled: true
path.config: /usr/share/logstash/conf.d/*.conf
path.logs: /var/log/logstash

Change xpack.monitoring.elasticsearch.hosts to the address of your Elasticsearch container.

logstash.conf:

input {
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 5047
    codec => json_lines
  }
}
output {
  elasticsearch {
    hosts => "172.19.0.2:9200"
    index => "springboot-logstash-%{+YYYY.MM.dd}"
    user => "elastic"
    password => "123456"
  }
}

In the output section, hosts is the address of the Elasticsearch container, index is the name of the log index, and user and password are the credentials set up through X-Pack. In the input section, port is the port exposed for collecting logs.
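The two files have to exist on the host before the container starts, in the layout that the docker run command in the next step mounts. As a rough sketch, they could be generated like this; `LOGSTASH_DIR` and `ES_PASSWORD` are illustrative variables with placeholder defaults, and the container name es01 is used instead of an IP on the assumption that Logstash joins the same elastic network.

```shell
#!/bin/sh
# Sketch: write logstash.yml and logstash.conf into the mounted directory layout.
# Point LOGSTASH_DIR at /data/logstash to match the volume mounts in this post.
LOGSTASH_DIR=${LOGSTASH_DIR:-$(mktemp -d)}
ES_PASSWORD=${ES_PASSWORD:-changeme}
mkdir -p "$LOGSTASH_DIR/config" "$LOGSTASH_DIR/conf.d"

cat > "$LOGSTASH_DIR/config/logstash.yml" <<EOF
http.host: "0.0.0.0"
xpack.monitoring.enabled: true
xpack.monitoring.elasticsearch.hosts: [ "http://es01:9200" ]
path.config: /usr/share/logstash/conf.d/*.conf
path.logs: /var/log/logstash
EOF

# TCP input on 5047 with one JSON document per line, output to Elasticsearch.
cat > "$LOGSTASH_DIR/conf.d/logstash.conf" <<EOF
input {
  tcp {
    mode => "server"
    host => "0.0.0.0"
    port => 5047
    codec => json_lines
  }
}
output {
  elasticsearch {
    hosts => "es01:9200"
    index => "springboot-logstash-%{+YYYY.MM.dd}"
    user => "elastic"
    password => "$ES_PASSWORD"
  }
}
EOF

echo "wrote config under $LOGSTASH_DIR"
```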

Run Logstash

docker run -it -d -p 5047:5047 -p 9600:9600 \
  --name logstash --privileged=true --net elastic \
  -v /data/logstash/config/logstash.yml:/usr/share/logstash/config/logstash.yml \
  -v /data/logstash/conf.d/:/usr/share/logstash/conf.d/ \
  logstash:7.8.0

Port 5047 is the port set in the tcp input of logstash.conf.

- Create a Spring Boot project

Add the dependency

<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>6.6</version>
</dependency>

logback-spring.xml

<?xml version="1.0" encoding="UTF-8"?>
<configuration debug="true">
    <!-- Read values from the Spring configuration -->
    <springProperty scope="context" name="logPath" source="log.path" defaultValue="/app/log/elk-biz"/>
    <springProperty scope="context" name="appName" source="spring.application.name"/>
    <!-- Variable definitions -->
    <property name="LOG_HOME" value="${logPath}"/>
    <property name="SPRING_NAME" value="${appName}"/>

    <!-- Converter classes needed for coloured log output -->
    <conversionRule conversionWord="clr" converterClass="org.springframework.boot.logging.logback.ColorConverter"/>
    <conversionRule conversionWord="wex" converterClass="org.springframework.boot.logging.logback.WhitespaceThrowableProxyConverter"/>
    <conversionRule conversionWord="wEx" converterClass="org.springframework.boot.logging.logback.ExtendedWhitespaceThrowableProxyConverter"/>

    <!-- Sleuth trace formatting and console colour pattern -->
    <property name="CONSOLE_LOG_PATTERN"
              value="%d{yyyy-MM-dd HH:mm:ss.SSS} %highlight(%-5level) [${appName},%yellow(%X{X-B3-TraceId}),%green(%X{X-B3-SpanId}),%blue(%X{X-B3-ParentSpanId})] [%yellow(%thread)] %green(%logger:%L) :%msg%n"/>

    <!-- ######## Log output formats and destinations ######## -->
    <!-- Console output -->
    <appender name="STDOUT" class="ch.qos.logback.core.ConsoleAppender">
        <encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">
            <pattern>${CONSOLE_LOG_PATTERN}</pattern>
            <!-- <charset>GBK</charset> -->
        </encoder>
    </appender>

    <!-- Plain file output -->
    <appender name="FILE" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <FileNamePattern>${LOG_HOME}/log_${SPRING_NAME}_%d{yyyy-MM-dd}_%i.log</FileNamePattern>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>200MB</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">
            <pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %-5level %logger{50} - %msg%n</pattern>
        </encoder>
    </appender>

    <!-- File output for logs captured by the AOP interface interceptor -->
    <appender name="bizAppender" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <rollingPolicy class="ch.qos.logback.core.rolling.TimeBasedRollingPolicy">
            <FileNamePattern>${LOG_HOME}/log_%d{yyyy-MM-dd}_%i.log</FileNamePattern>
            <timeBasedFileNamingAndTriggeringPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedFNATP">
                <maxFileSize>200MB</maxFileSize>
            </timeBasedFileNamingAndTriggeringPolicy>
        </rollingPolicy>
        <encoder class="ch.qos.logback.classic.encoder.PatternLayoutEncoder">
            <pattern>%d{yyyy-MM-dd HH:mm:ss.SSS} [%thread] %-5level %logger{50} - %msg%n</pattern>
        </encoder>
    </appender>

    <!-- Ship logs to Logstash over TCP -->
    <!-- <appender name="STASH" class="net.logstash.logback.appender.LogstashTcpSocketAppender">-->
    <!-- <destination>10.11.74.123:9600</destination>-->
    <!-- <encoder class="net.logstash.logback.encoder.LogstashEncoder" />-->
    <!-- </appender>-->
    <appender name="LOGSTASH" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
        <!-- Reachable Logstash log-collection port -->
        <destination>localhost:5047</destination>
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LogstashEncoder"/>
        <encoder class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">
            <providers>
                <timestamp>
                    <timeZone>Asia/Shanghai</timeZone>
                </timestamp>
                <pattern>
                    <pattern>
                        {
                        "app_name":"${SPRING_NAME}",
                        "traceid":"%X{traceid}",
                        "ip": "%X{ip}",
                        "server_name": "%X{server_name}",
                        "level": "%level",
                        "trace": "%X{X-B3-TraceId:-}",
                        "span": "%X{X-B3-SpanId:-}",
                        "parent": "%X{X-B3-ParentSpanId:-}",
                        "thread": "%thread",
                        "class": "%logger{40} - %M:%L",
                        "message": "%message",
                        "stack_trace": "%exception{10}"
                        }
                    </pattern>
                </pattern>
            </providers>
        </encoder>
    </appender>

    <!-- ######## Log levels ######## -->
    <!-- MyBatis logging -->
    <!-- A logger sets the level for one package or one specific class -->
    <logger name="com.apache.ibatis" level="TRACE"/>
    <logger name="java.sql.Connection" level="DEBUG"/>
    <logger name="java.sql.Statement" level="DEBUG"/>
    <logger name="java.sql.PreparedStatement" level="DEBUG"/>
    <logger name="org.apache.ibatis.logging.stdout.StdOutImpl" level="DEBUG"/>

    <!-- SaveLogAspect logging for external interface calls -->
    <!-- A logger sets the level for one package or one specific class -->
    <logger name="com.springweb.baseweb.log.aop.SaveLogAspect" additivity="false" level="INFO">
        <!-- Write to both files -->
        <appender-ref ref="bizAppender"/>
        <appender-ref ref="FILE"/>
    </logger>

    <root level="INFO">
        <!-- Default file and console output -->
        <appender-ref ref="FILE"/>
        <appender-ref ref="STDOUT"/>
        <!-- Default output to Logstash -->
        <appender-ref ref="LOGSTASH"/>
    </root>
</configuration>

Add a web endpoint

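Add some log statements

import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/")
@SpringBootApplication
public class ElkApplication {

    private final Logger logger = LoggerFactory.getLogger(ElkApplication.class);

    public static void main(String[] args) {
        SpringApplication.run(ElkApplication.class, args);
    }

    // Logs one line at each of four levels; with the root level at INFO,
    // the debug line is filtered out.
    @GetMapping("")
    public void test() {
        logger.warn("warning, haha");
        logger.info("ordinary log");
        logger.debug("debug log");
        logger.error("something went wrong");
    }
}

Log in at http://localhost:5601/ to view the logs.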

References:

https://juejin.cn/post/7057767570891341861

https://blog.csdn.net/u010554294/article/details/88996397

https://ouch1978.github.io/docs/containerization/trouble-shooting/fix-vm-max-map-count-is-too-low
