I. Installing XTuner

1. Get the code

mkdir project

cd project

git clone https://github.com/InternLM/xtuner.git

2. Set up the environment

cd xtuner

pip install -r requirements.txt

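# Install from source

pip install -e '.[all]'

3. List the config files

XTuner ships a number of ready-to-use config files. List them with the following commands:

# List all built-in config files

xtuner list-cfg

# List the config files related to the internlm2 model

xtuner list-cfg | grep internlm2

II. Fine-tuning a large model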

1. Download the model

cd /root/share/model_repos/

git clone https://www.modelscope.cn/Shanghai_AI_Laboratory/internlm2-chat-7b.git

2. Prepare the fine-tuning data

2.1 Get the raw data

Take the Medication_QA dataset as an example:

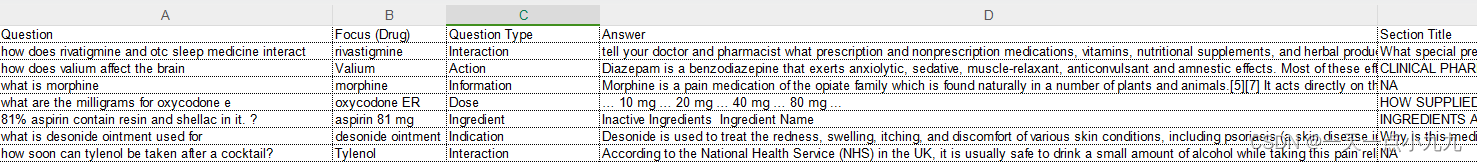

Raw data format:

The “Question” and “Answer” fields of each record are used to build the dataset.

2.2 Convert the data to XTuner's data format

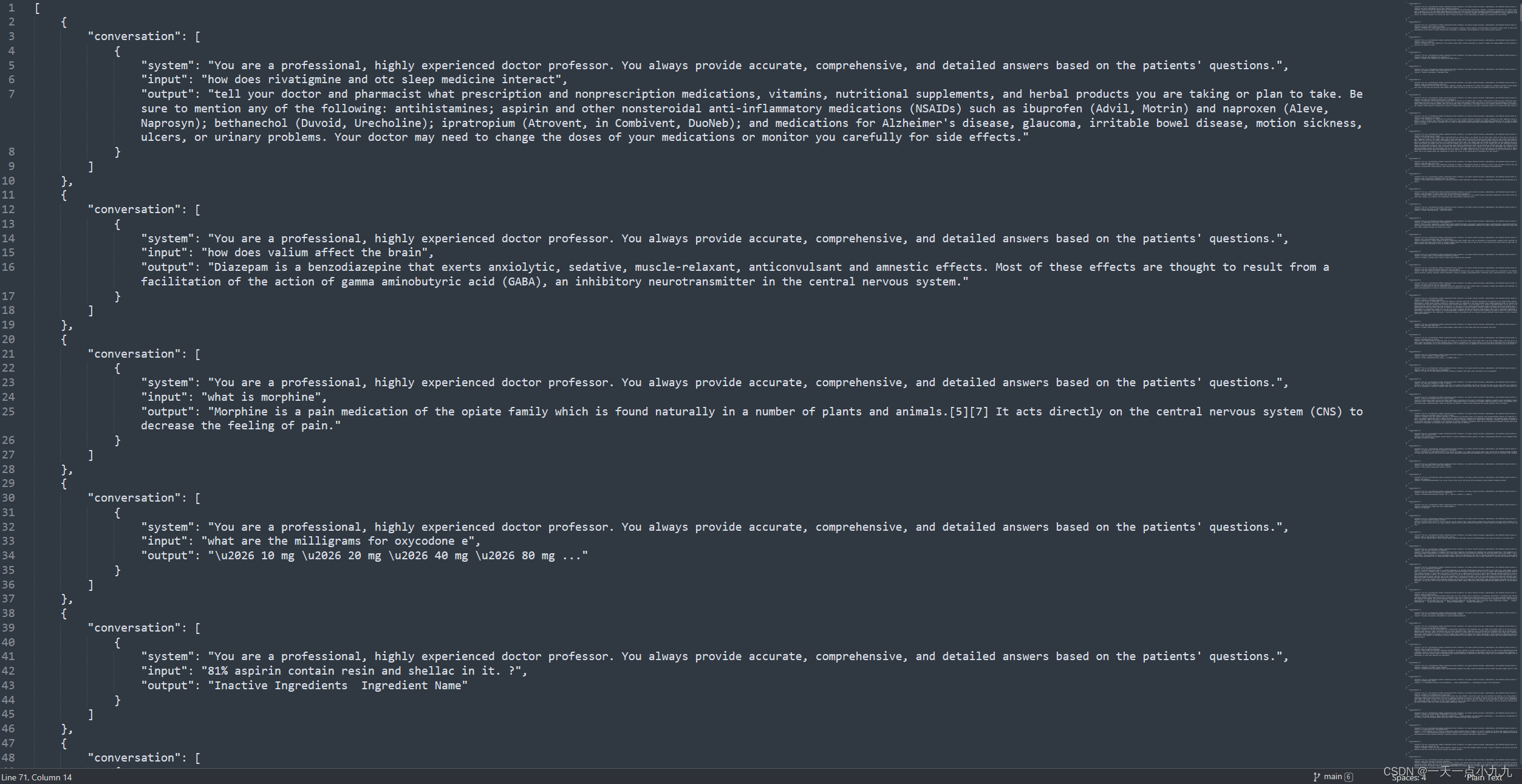

XTuner data format (.jsonl):

[
    {"conversation": [{"system": "xxx", "input": "xxx", "output": "xxx"}]},
    {"conversation": [{"system": "xxx", "input": "xxx", "output": "xxx"}]}
]

Each raw record maps to one “conversation” entry, as follows:

input corresponds to Question

output corresponds to Answer

system is always set to “You are a professional, highly experienced doctor professor. You always provide accurate, comprehensive, and detailed answers based on the patients' questions.”
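The mapping above can be sketched in a few lines of Python. This is only an illustration and not part of XTuner: `make_record` and `is_valid_record` are hypothetical helper names, and the sample question and answer are made up. The validator is handy for spotting rows where a spreadsheet cell was empty, since openpyxl returns `None` for empty cells when `values_only=True`.

```python
import json

SYSTEM_PROMPT = (
    "You are a professional, highly experienced doctor professor. "
    "You always provide accurate, comprehensive, and detailed answers "
    "based on the patients' questions."
)


def make_record(question, answer, system=SYSTEM_PROMPT):
    """Wrap one Question/Answer pair in a single-turn "conversation" entry."""
    return {"conversation": [{"system": system, "input": question, "output": answer}]}


def is_valid_record(record):
    """Check that a record has the structure described above."""
    turns = record.get("conversation") if isinstance(record, dict) else None
    if not isinstance(turns, list) or not turns:
        return False
    return all(
        isinstance(turn, dict)
        and isinstance(turn.get("input"), str)
        and isinstance(turn.get("output"), str)
        for turn in turns
    )


# Made-up sample pair, for illustration only
record = make_record("What is drug X used for?", "Drug X is used to ...")
print(json.dumps(record, indent=4))
print(is_valid_record(record))
```

Running `is_valid_record` over every entry of the generated file before training is a cheap way to catch malformed rows early.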

Formatted data:

The conversion code (xlsx2jsonl.py):

import json

import openpyxl


def process_excel_to_json(input_file, output_file):
    # Load the workbook
    wb = openpyxl.load_workbook(input_file)
    # Select the "DrugQA" sheet
    sheet = wb["DrugQA"]
    # Initialize the output data structure
    output_data = []
    # Every record shares the same system prompt
    system_value = "You are a professional, highly experienced doctor professor. You always provide accurate, comprehensive, and detailed answers based on the patients' questions."
    # Skip the header row, then read column A (question) and column D (answer)
    for row in sheet.iter_rows(min_row=2, max_col=4, values_only=True):
        # Create the conversation dictionary
        conversation = {"system": system_value, "input": row[0], "output": row[3]}
        # Append the conversation to the output data
        output_data.append({"conversation": [conversation]})
    # Write the output data to a JSON file
    with open(output_file, 'w', encoding='utf-8') as json_file:
        json.dump(output_data, json_file, indent=4)
    print(f"Conversion complete. Output written to {output_file}")


# Replace 'MedInfo2019-QA-Medications.xlsx' and 'output.jsonl' with your actual input and output file names
process_excel_to_json('MedInfo2019-QA-Medications.xlsx', 'output.jsonl')

2.3 Split into training and test sets (7:3)

import json
import random


def split_conversations(input_file, train_output_file, test_output_file):
    # Read the input file (a single JSON array, despite the .jsonl extension)
    with open(input_file, 'r', encoding='utf-8') as jsonl_file:
        data = json.load(jsonl_file)
    # Count the number of conversation elements
    num_conversations = len(data)
    # Shuffle the data randomly (a single shuffle is sufficient)
    random.shuffle(data)
    # Calculate the split point for train and test
    split_point = int(num_conversations * 0.7)
    # Split the data into train and test
    train_data = data[:split_point]
    test_data = data[split_point:]
    # Write the train data to a new file
    with open(train_output_file, 'w', encoding='utf-8') as train_jsonl_file:
        json.dump(train_data, train_jsonl_file, indent=4)
    # Write the test data to a new file
    with open(test_output_file, 'w', encoding='utf-8') as test_jsonl_file:
        json.dump(test_data, test_jsonl_file, indent=4)
    print(f"Split complete. Train data written to {train_output_file}, Test data written to {test_output_file}")


# Replace 'output.jsonl' and the two output names with your actual file names
split_conversations('output.jsonl', 'MedQA2019-structured-train.jsonl', 'MedQA2019-structured-test.jsonl')

3. Prepare the config file

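Copy the built-in config file that matches the chosen model. The command takes the config name without a suffix and saves it as internlm2_chat_7b_qlora_oasst1_e3_copy.py in the target directory:

xtuner copy-cfg internlm2_chat_7b_qlora_oasst1_e3 .

Then edit the copied file as follows.

# Modify the import section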

- from xtuner.dataset.map_fns import oasst1_map_fn, template_map_fn_factory

+ from xtuner.dataset.map_fns import template_map_fn_factory

# Change the model to the local path

- pretrained_model_name_or_path = 'internlm/internlm2-chat-7b'

+ pretrained_model_name_or_path = '/root/share/model_repos/internlm2-chat-7b'

# Point the training data to MedQA2019-structured-train.jsonl

- data_path = 'timdettmers/openassistant-guanaco'

+ data_path = 'MedQA2019-structured-train.jsonl'

# Modify the train_dataset object

  train_dataset = dict(
      type=process_hf_dataset,
-     dataset=dict(type=load_dataset, path=data_path),
+     dataset=dict(type=load_dataset, path='json', data_files=dict(train=data_path)),
      tokenizer=tokenizer,
      max_length=max_length,
-     dataset_map_fn=oasst1_map_fn,
+     dataset_map_fn=None,
      template_map_fn=dict(type=template_map_fn_factory, template=prompt_template),
      remove_unused_columns=True,
      shuffle_before_pack=True,
      pack_to_max_length=pack_to_max_length)

4. Start fine-tuning

xtuner train internlm2_chat_7b_qlora_oasst1_e3_copy.py --deepspeed deepspeed_zero2

5. Convert the resulting PTH model to a HuggingFace model, i.e. generate the adapter folder

mkdir hf

export MKL_SERVICE_FORCE_INTEL=1

export MKL_THREADING_LAYER=GNU

xtuner convert pth_to_hf internlm2_chat_7b_qlora_oasst1_e3_copy.py ./work_dirs/internlm2_chat_7b_qlora_oasst1_e3_copy/iter_96.pth ./hf

The hf folder is what is usually referred to as the “LoRA model files”.
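The checkpoint name iter_96.pth in the command above depends on how many iterations the run completed, so it will differ for other datasets or settings. A minimal sketch for finding the most recent checkpoint is shown here. It assumes checkpoints are named iter_<N>.pth inside the work directory, as in the command above; `latest_checkpoint` is a hypothetical helper, not an XTuner function.

```python
import re
from pathlib import Path


def latest_checkpoint(work_dir):
    """Return the iter_<N>.pth path with the largest N in work_dir, or None."""
    best_path, best_iter = None, -1
    for path in Path(work_dir).glob("iter_*.pth"):
        # Only accept names of the exact form iter_<digits>.pth
        match = re.fullmatch(r"iter_(\d+)\.pth", path.name)
        if match and int(match.group(1)) > best_iter:
            best_path, best_iter = path, int(match.group(1))
    return best_path
```

Calling `latest_checkpoint("./work_dirs/internlm2_chat_7b_qlora_oasst1_e3_copy")` would return the path to pass to `xtuner convert pth_to_hf`. Comparing the iteration numbers as integers avoids the string-sorting trap where iter_96 sorts after iter_128.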

6. Merge the HuggingFace adapter into the large language model:

xtuner convert merge /root/share/model_repos/internlm2-chat-7b ./hf ./merged --max-shard-size 2GB

# xtuner convert merge \

# ${NAME_OR_PATH_TO_LLM} \

# ${NAME_OR_PATH_TO_ADAPTER} \

# ${SAVE_PATH} \

# --max-shard-size 2GB

The ./merged folder now contains the fine-tuned model, which is used in exactly the same way as the original model (internlm2-chat-7b).
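As a rough sketch of what using the merged model might look like, the snippet below loads ./merged with Hugging Face transformers and asks a single question. It assumes a CUDA GPU with enough memory for a 7B model in float16, and that the merged folder contains InternLM2's custom modeling code (which provides the `chat` method). `chat_with_merged_model` is a hypothetical wrapper name.

```python
def chat_with_merged_model(prompt, model_path="./merged"):
    """Load the merged model and return its reply to a single prompt."""
    # Imported lazily so that defining this function needs no GPU libraries
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # InternLM2 ships custom modeling code, hence trust_remote_code=True
    tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
    model = AutoModelForCausalLM.from_pretrained(
        model_path, torch_dtype=torch.float16, trust_remote_code=True
    ).cuda().eval()

    # chat() comes from InternLM2's remote code, not from transformers itself
    response, _history = model.chat(tokenizer, prompt, history=[])
    return response


# Example call (loads the full model, so it is left commented out):
# print(chat_with_merged_model("What are the common side effects of ibuprofen?"))
```

The function reloads the model on every call, which is fine for a quick check but wasteful for repeated queries; in that case load the tokenizer and model once and reuse them.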
