First, a brief introduction to Partitioner and Combiner.

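Partitioner class

- Partitions the keys on the Map side
- The default is HashPartitioner
  - Takes the key's hash value
  - Takes that hash value modulo the number of Reduce tasks
  - The result decides which Reducer each record is sent to
- Custom Partitioner
  - Extend the abstract class Partitioner and override getPartition
  - job.setPartitionerClass(MyPartitioner.class)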
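HashPartitioner's rule can be sketched in plain Java as below. This is a dependency-free illustration, not Hadoop's class: the name HashPartitionSketch is made up, and it uses String.hashCode(), whereas Hadoop's Text computes its own hash, so the concrete reducer numbers will differ from a real job. What carries over is the formula: mask off the sign bit, then take the remainder by the number of reduce tasks.

```java
// Plain-Java sketch of the HashPartitioner formula (no Hadoop dependency).
class HashPartitionSketch {
    // Clearing the sign bit keeps negative hash codes from producing a
    // negative index; the remainder then picks one of the reduce tasks.
    static int getPartition(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        // Hypothetical keys; with String.hashCode() the numbers differ from Hadoop's Text
        String[] keys = {"13612345678", "13812345678", "15012345678"};
        for (String k : keys) {
            System.out.println(k + " -> reducer " + getPartition(k, 3));
        }
    }
}
```

Because records with equal keys have equal hash codes, they always land on the same reducer, which is the property a reducer needs to see every value for its keys.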

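Combiner class

- A Combiner is essentially a local Reduce run inside the map task
- It aggregates data locally before the shuffle
- It is a performance optimization and is optional
- Its input and output types are the same (both are the map output key/value types)
- Conditions for reusing a Reducer as the Combiner
  - The operation is commutative and associative
- Setting a Combiner
  - job.setCombinerClass(WCReducer.class)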
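Why the commutative/associative condition matters can be shown without Hadoop. In this plain-Java sketch (the class name and values are hypothetical), two map tasks see values for the same key. Summing per task first and then summing the partial results gives the same total as summing everything at once, but averaging per task first does not, so a summing Reducer can double as the Combiner while an averaging one cannot.

```java
import java.util.Arrays;
import java.util.List;

class CombinerSafety {
    static double sum(List<Double> xs) {
        double s = 0;
        for (double x : xs) s += x;
        return s;
    }

    static double avg(List<Double> xs) {
        return sum(xs) / xs.size();
    }

    public static void main(String[] args) {
        // Hypothetical values for one key, split across two map tasks
        List<Double> mapA = Arrays.asList(1.0, 2.0, 3.0);
        List<Double> mapB = Arrays.asList(4.0);
        List<Double> all = Arrays.asList(1.0, 2.0, 3.0, 4.0);

        // Sum: combining per task first does not change the result (10.0 both ways)
        double sumDirect = sum(all);
        double sumCombined = sum(Arrays.asList(sum(mapA), sum(mapB)));

        // Average: averaging per-task averages gives 3.0 instead of 2.5
        double avgDirect = avg(all);
        double avgCombined = avg(Arrays.asList(avg(mapA), avg(mapB)));

        System.out.println("sum: " + sumDirect + " vs " + sumCombined); // prints "sum: 10.0 vs 10.0"
        System.out.println("avg: " + avgDirect + " vs " + avgCombined); // prints "avg: 2.5 vs 3.0"
    }
}
```

A common workaround for averages is to have the combiner emit (sum, count) pairs and divide only in the reducer.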

Now let's look at both concepts through an example.

1. Requirements

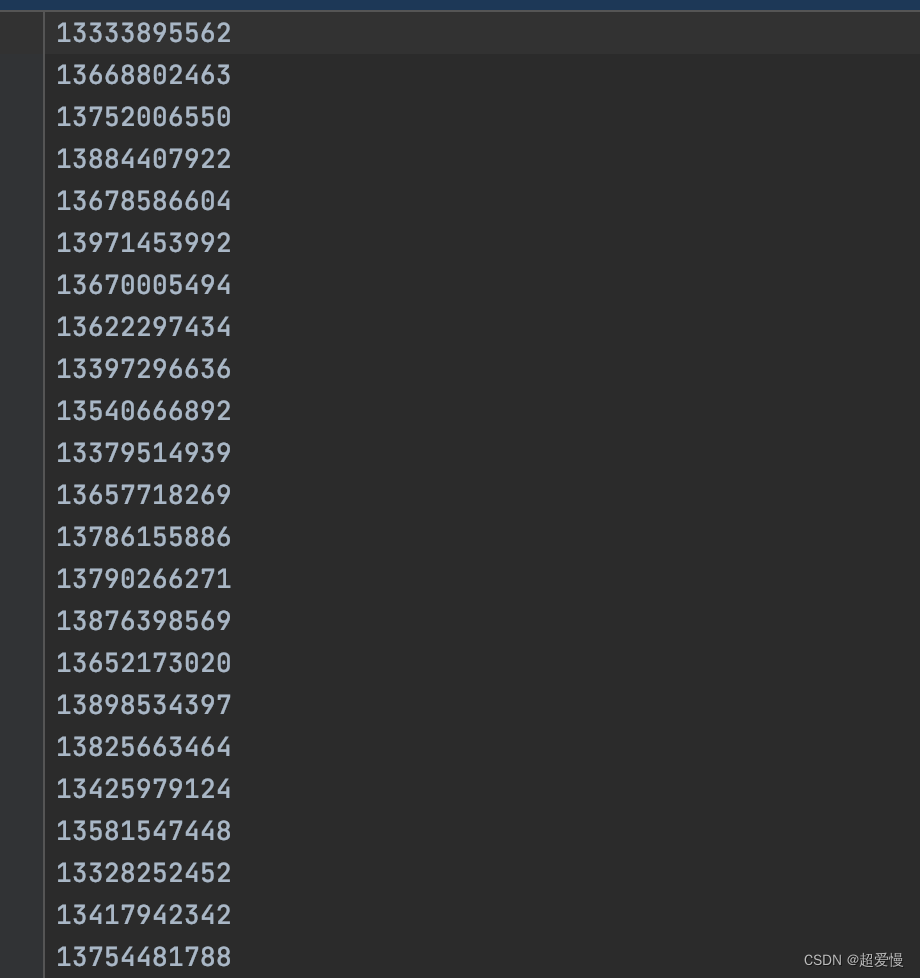

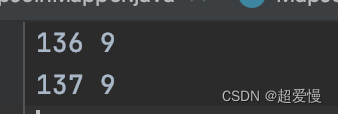

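A text file stores phone numbers, one per line. We need to store the numbers starting with 136 or 137, the numbers starting with 138 or 139, and the numbers with any other prefix in separate outputs, and count how many numbers start with each prefix.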
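Before writing the job, the expected result can be pinned down with an in-memory sketch. The numbers below are hypothetical sample data (the real input is only shown in the screenshots) and PhonePrefixCount is an illustrative name; the grouping rule is the one the job uses. Each group corresponds to one reducer and therefore one output file, part-r-00000 through part-r-00002.

```java
import java.util.Map;
import java.util.TreeMap;

class PhonePrefixCount {
    // 136/137 -> group 0, 138/139 -> group 1, everything else -> group 2
    static int group(String prefix) {
        switch (prefix) {
            case "136": case "137": return 0;
            case "138": case "139": return 1;
            default: return 2;
        }
    }

    // Count numbers per 3-digit prefix, with one sorted map per group
    static Map<Integer, Map<String, Integer>> countByGroup(String[] phones) {
        Map<Integer, Map<String, Integer>> result = new TreeMap<>();
        for (String raw : phones) {
            String phone = raw.trim();
            if (phone.length() < 3) continue; // skip blank or malformed lines
            String prefix = phone.substring(0, 3);
            result.computeIfAbsent(group(prefix), g -> new TreeMap<>())
                  .merge(prefix, 1, Integer::sum);
        }
        return result;
    }

    public static void main(String[] args) {
        // Hypothetical sample input, not the data from the screenshots
        String[] phones = {"13611112222", "13722223333", "13633334444",
                           "13844445555", "13955556666", "15066667777"};
        System.out.println(countByGroup(phones));
        // prints {0={136=2, 137=1}, 1={138=1, 139=1}, 2={150=1}}
    }
}
```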

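2. The PhoneMapper class

```java
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

public class PhoneMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

    // Reused across calls; context.write serializes the objects immediately
    private final Text prefix = new Text();
    private static final IntWritable ONE = new IntWritable(1);

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        String phone = value.toString().trim();
        // Skip blank or malformed lines that have no 3-digit prefix
        if (phone.length() < 3) {
            return;
        }
        // Emit the 3-digit prefix as the key so that the reducer counts per prefix
        // whether or not the combiner runs
        prefix.set(phone.substring(0, 3));
        context.write(prefix, ONE);
    }
}
```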

3. The PhoneReducer class

```java
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class PhoneReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context)
            throws IOException, InterruptedException {
        // Sum the counts for this key: 1s from the mapper or partial sums from the combiner
        int count = 0;
        for (IntWritable intWritable : values) {
            count += intWritable.get();
        }
        context.write(key, new IntWritable(count));
    }
}
```

4. The PhonePartitioner class

```java
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Partitioner;

public class PhonePartitioner extends Partitioner<Text, IntWritable> {

    // Returns 0, 1 or 2, so the job must run with at least 3 reduce tasks
    @Override
    public int getPartition(Text text, IntWritable intWritable, int numPartitions) {
        String phone = text.toString();
        // Keys shorter than 3 characters have no prefix; they fall through to partition 2
        String prefix = phone.length() >= 3 ? phone.substring(0, 3) : phone;
        // 136/137 -> partition 0, 138/139 -> partition 1, all other numbers -> partition 2
        if ("136".equals(prefix) || "137".equals(prefix)) {
            return 0;
        } else if ("138".equals(prefix) || "139".equals(prefix)) {
            return 1;
        } else {
            return 2;
        }
    }
}
```

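5. The PhoneCombiner class

```java
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class PhoneCombiner extends Reducer<Text, IntWritable, Text, IntWritable> {

    @Override
    protected void reduce(Text key, Iterable<IntWritable> values, Context context)
            throws IOException, InterruptedException {
        // Pre-aggregate the counts for this key inside the map task
        int count = 0;
        for (IntWritable intWritable : values) {
            count += intWritable.get();
        }
        // Emits the 3-digit prefix as the key. Hadoop may run a combiner zero, one
        // or several times, so the mapper, not the combiner, should produce the
        // keys the reducer needs.
        String k = key.toString();
        String prefix = k.length() >= 3 ? k.substring(0, 3) : k;
        context.write(new Text(prefix), new IntWritable(count));
    }
}
```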
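Hadoop treats the combiner purely as an optimization and may run it zero, one, or several times for the same key (while writing each spill, and possibly again when spills are merged). The plain-Java sketch below (hypothetical names, no Hadoop dependency) simulates the values of one key spread across two spill files and shows that, with a summing combiner, the reducer's final count is the same however many times the combiner runs. This is also why a combiner should only pre-aggregate and leave keys alone: the reducer must receive correct input even when no combining happened.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class CombinerRuns {
    static int sum(List<Integer> xs) {
        int s = 0;
        for (int x : xs) s += x;
        return s;
    }

    // Values for ONE key spread across several spill files.
    // combinerRuns = 0: no combining; 1: combine each spill; 2: combine again after merging.
    static int finalCount(List<List<Integer>> spills, int combinerRuns) {
        List<Integer> merged = new ArrayList<>();
        for (List<Integer> spill : spills) {
            if (combinerRuns >= 1) {
                merged.add(sum(spill));   // combiner while writing this spill
            } else {
                merged.addAll(spill);     // raw 1s go straight to the merge
            }
        }
        if (combinerRuns >= 2) {
            int combined = sum(merged);   // combiner again during the merge
            merged = new ArrayList<>(Arrays.asList(combined));
        }
        return sum(merged);               // the reducer always does the final sum
    }

    public static void main(String[] args) {
        // Hypothetical: one key appears 3 times in one spill and 2 times in another
        List<List<Integer>> spills = Arrays.asList(Arrays.asList(1, 1, 1), Arrays.asList(1, 1));
        for (int runs = 0; runs <= 2; runs++) {
            // prints count 5 for every value of runs
            System.out.println("combiner runs=" + runs + " -> count " + finalCount(spills, runs));
        }
    }
}
```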

6. The PhoneDriver class

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class PhoneDriver {
    public static void main(String[] args)
            throws IOException, ClassNotFoundException, InterruptedException {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);
        job.setJarByClass(PhoneDriver.class);

        // Map side: mapper, combiner and partitioner
        job.setMapperClass(PhoneMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);
        job.setCombinerClass(PhoneCombiner.class);
        job.setPartitionerClass(PhonePartitioner.class);

        // PhonePartitioner returns 0, 1 or 2: 3 reduce tasks give one output file per group
        job.setNumReduceTasks(3);
        job.setReducerClass(PhoneReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        Path inPath = new Path("in/demo4/phone.csv");
        FileInputFormat.setInputPaths(job, inPath);

        // Delete an existing output directory; otherwise job submission fails
        Path outPath = new Path("out/out6");
        FileSystem fs = FileSystem.get(outPath.toUri(), conf);
        if (fs.exists(outPath)) {
            fs.delete(outPath, true);
        }
        FileOutputFormat.setOutputPath(job, outPath);

        // Exit with a non-zero status if the job fails
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }
}
```
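The number of reduce tasks set in the driver has to cover every value getPartition can return: Hadoop checks each map output record's partition number and fails the map task if it falls outside [0, numReduceTasks). The sketch below mirrors that check in plain Java. getPartition reimplements PhonePartitioner's rule on strings, and checkPartition is a hypothetical stand-in for Hadoop's internal check, not a Hadoop API.

```java
class PartitionRangeCheck {
    // Same rule as PhonePartitioner, applied to a plain String key
    static int getPartition(String phone) {
        String prefix = phone.length() >= 3 ? phone.substring(0, 3) : phone;
        if (prefix.equals("136") || prefix.equals("137")) return 0;
        if (prefix.equals("138") || prefix.equals("139")) return 1;
        return 2;
    }

    // Hypothetical stand-in for the range check Hadoop applies to each record
    static void checkPartition(int partition, int numReduceTasks) {
        if (partition < 0 || partition >= numReduceTasks) {
            throw new IllegalArgumentException(
                "Illegal partition " + partition + " for " + numReduceTasks + " reduce tasks");
        }
    }

    public static void main(String[] args) {
        String phone = "15012345678"; // hypothetical number in the "other" group
        checkPartition(getPartition(phone), 3); // fine: partition 2 < 3
        try {
            checkPartition(getPartition(phone), 2); // partition 2 is out of range
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage()); // prints "Illegal partition 2 for 2 reduce tasks"
        }
    }
}
```

With setNumReduceTasks(2), any number outside 136 to 139 would make the job fail; with more than 3 reduce tasks the job still runs, but the extra reducers write empty output files.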

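7. Summary

The new concepts in this example are partitioning (Partitioner) and combining (Combiner).

The flow in this example is:

driver -> mapper -> partitioner -> combiner -> reducer

The driver only configures and submits the job; the records then pass through the other stages in that order.

Every record the mapper writes with context.write() is assigned a partition by the partitioner and then stored in an in-memory circular buffer. The mapper then processes the next record, repeating until its whole input split has been processed.

When the buffer fills past a threshold (80% by default), its contents are spilled to disk: the framework sorts the records by partition and then by key, and the combiner, if one is set, runs on each partition's sorted records before the spill file is written. When the map task finishes, its spill files are merged into a single partitioned, sorted output file, and the combiner may run again during this merge. Each reducer then fetches only its own partition from every map task's output. Because the combiner has already collapsed repeated keys, much less data crosses the network in the shuffle and the reducers have less work to do.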
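The map-side steps above can be simulated in memory. In this sketch (plain Java, hypothetical names, no Hadoop dependency) each record gets its partition when it is collected, and a "spill" sorts the buffered records by partition and then by key before a combiner sums adjacent records with the same key. It leaves out what Hadoop does with serialized bytes, the spill threshold, and the merging of multiple spill files.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

class SpillSketch {
    // One buffered map output record; the partition is fixed when it is collected
    static final class Rec {
        final int partition;
        final String key;
        final int value;

        Rec(int partition, String key, int value) {
            this.partition = partition;
            this.key = key;
            this.value = value;
        }

        @Override
        public String toString() {
            return partition + ":" + key + "=" + value;
        }
    }

    // Same rule as PhonePartitioner, applied to 3-digit prefixes
    static int partitionOf(String key) {
        if (key.equals("136") || key.equals("137")) return 0;
        if (key.equals("138") || key.equals("139")) return 1;
        return 2;
    }

    // Sort by (partition, key), then combine adjacent records with the same key
    static List<Rec> sortAndCombine(List<Rec> buffer) {
        List<Rec> sorted = new ArrayList<>(buffer);
        sorted.sort(Comparator.comparingInt((Rec r) -> r.partition).thenComparing(r -> r.key));
        List<Rec> out = new ArrayList<>();
        for (Rec r : sorted) {
            Rec last = out.isEmpty() ? null : out.get(out.size() - 1);
            if (last != null && last.partition == r.partition && last.key.equals(r.key)) {
                out.set(out.size() - 1, new Rec(r.partition, r.key, last.value + r.value));
            } else {
                out.add(r);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Hypothetical prefixes emitted by the mapper, in input order
        String[] keys = {"139", "136", "150", "136", "138", "139"};
        List<Rec> buffer = new ArrayList<>();
        for (String k : keys) {
            buffer.add(new Rec(partitionOf(k), k, 1));
        }
        System.out.println(sortAndCombine(buffer));
        // prints [0:136=2, 1:138=1, 1:139=2, 2:150=1]
    }
}
```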