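Contents

1. Overview

2. Monitoring Platform Architecture

3. Deploying Prometheus

3.1 Introduction to Prometheus

3.2 Deploying the node-exporter DaemonSet

3.3 Deploying RBAC

3.4 ConfigMap

3.5 Deployment

3.6 Service

3.7 Verifying Prometheus

4. Deploying Grafana

4.1 Deployment

4.2 Service

4.3 Ingress

4.4 Verifying Grafana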

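1. Overview

This article walks through building a monitoring platform for Kubernetes (k8s). A complete k8s monitoring platform lets us watch resource usage on production servers at any time, such as CPU, memory, disk, and network I/O. Beyond visualizing resources, it can also raise alerts, which improves the availability and stability of the production environment and speeds up troubleshooting.

Next, we build a k8s monitoring platform based on Prometheus + Grafana.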

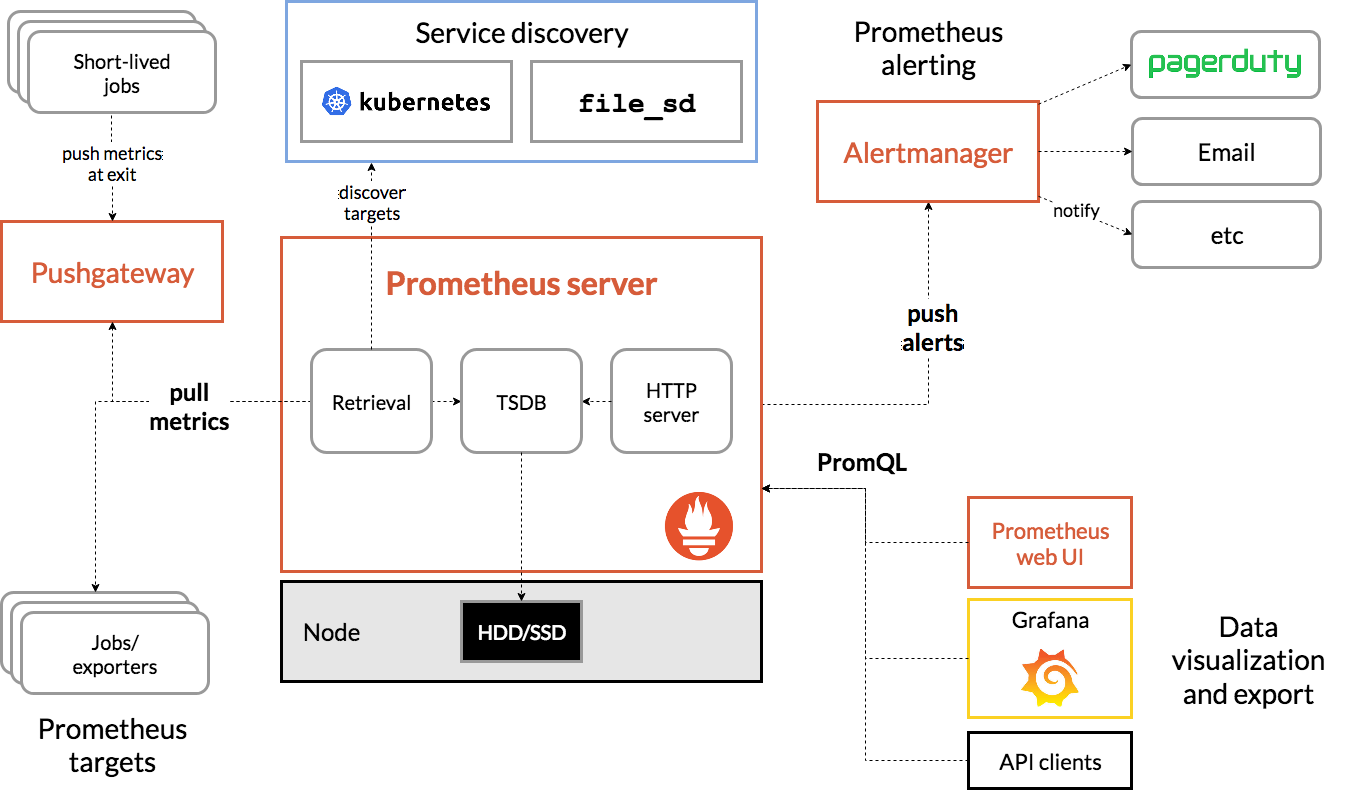

二、监控平台架构图

3. Deploying Prometheus

3.1 Introduction to Prometheus

Official Prometheus documentation: Overview | Prometheus.

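Prometheus is an open-source systems monitoring and alerting toolkit originally built at SoundCloud. Since the project started in 2012, many companies and organizations have adopted it, and it has a very active community of developers and users. It is now a standalone open-source project maintained independently of any company. To emphasize this and to clarify the project's governance structure, Prometheus joined the Cloud Native Computing Foundation in 2016 as its second hosted project, after Kubernetes.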

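Prometheus has the following main features:

- Open source

- Monitoring, alerting, and a built-in time-series database

- Periodically scrapes (pulls) the state of monitored components over HTTP

- No complex integration: a component only needs to expose an HTTP endpoint

- Discovers targets through service discovery or static configuration

- Supports multiple graphing and dashboard options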
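Because targets are scraped over plain HTTP, what Prometheus receives is its text exposition format: one `name{labels} value` sample per line plus `# HELP` / `# TYPE` comment lines. The node-exporter deployed in 3.2, for instance, serves such text at `/metrics` on port 9100. The Python sketch below parses a simplified subset of that format to show what a scrape returns; it is an illustration only, not Prometheus' own parser (it ignores timestamps and does not handle escaped characters in label values).

```python
import re

# A sample line looks like:  name{label="value",...} value [timestamp]
_SAMPLE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(\{([^}]*)\})?\s+(\S+)')
_LABEL = re.compile(r'([a-zA-Z_][a-zA-Z0-9_]*)="([^"]*)"')


def parse_exposition(text):
    """Parse a simplified subset of the Prometheus text format.

    Returns a list of (metric_name, labels, value) tuples. Comment lines
    (# HELP / # TYPE) are skipped, an optional trailing timestamp is ignored,
    and escaped quotes or braces inside label values are NOT handled.
    """
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        match = _SAMPLE.match(line)
        if match is None:
            continue  # not a sample line this sketch understands
        name, _braces, raw_labels, value = match.groups()
        labels = dict(_LABEL.findall(raw_labels or ""))
        samples.append((name, labels, float(value)))
    return samples
```

For example, `parse_exposition("node_load1 0.42")` returns `[("node_load1", {}, 0.42)]`.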

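Prometheus architecture diagram:

Prometheus components:

- prometheus server: the main service; it accepts external HTTP requests and collects, stores, and queries data

- prometheus targets: statically configured targets whose data is collected

- service discovery: dynamically discovers services to scrape

- prometheus alerting: alert notifications

- pushgateway: a metrics collection intermediary (similar to a Zabbix proxy); short-lived jobs push metrics to it and Prometheus scrapes it

- data visualization and export: data visualization and export (access clients)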

3.2 Deploying the node-exporter DaemonSet

Create the manifest file: vim node-exporter.yaml

## The YAML file contents are as follows

---
apiVersion: apps/v1
kind: DaemonSet # DaemonSet: one node-exporter Pod runs on every node
metadata:
  name: node-exporter
  namespace: kube-system # namespace
  labels:
    k8s-app: node-exporter
spec:
  selector:
    matchLabels:
      k8s-app: node-exporter
  template:
    metadata:
      labels:
        k8s-app: node-exporter
    spec:
      containers:
      - image: prom/node-exporter
        name: node-exporter
        ports:
        - containerPort: 9100
          protocol: TCP
          name: http
---
apiVersion: v1
kind: Service
metadata:
  labels:
    k8s-app: node-exporter
  name: node-exporter
  namespace: kube-system
spec:
  ports:
  - name: http
    port: 9100
    nodePort: 31672
    protocol: TCP
  type: NodePort # expose node-exporter as a NodePort Service on port 31672
  selector:
    k8s-app: node-exporter

Create the resources, then check the Pod and Service:

$ kubectl create -f node-exporter.yaml
daemonset.apps/node-exporter created
service/node-exporter created

$ kubectl get daemonset.apps/node-exporter -n kube-system
NAME            DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
node-exporter   2         2         2       2            2           <none>          36s

$ kubectl get service/node-exporter -n kube-system
NAME            TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)          AGE
node-exporter   NodePort   10.99.26.203   <none>        9100:31672/TCP   48s

3.3 Deploying RBAC
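The ClusterRole in this section lists which API groups, resources, and verbs the prometheus ServiceAccount may use. Note that the subresource nodes/proxy is listed separately from nodes: the ConfigMap in 3.4 scrapes paths such as /api/v1/nodes/<name>/proxy/metrics, which need it. As a rough sketch of how such rules are evaluated, the Python below copies the resource rules from rbac.yaml and checks a request against them. RBAC is purely additive (there are no deny rules), so one matching rule is enough. This is a simplified model that ignores resourceNames and nonResourceURLs, not the API server's actual authorizer.

```python
# Resource rules copied from the ClusterRole "prometheus" in rbac.yaml.
PROMETHEUS_RULES = [
    {"apiGroups": [""],  # "" is the core API group
     "resources": ["nodes", "nodes/proxy", "services", "endpoints", "pods"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["extensions"],
     "resources": ["ingresses"],
     "verbs": ["get", "list", "watch"]},
]


def _covers(allowed, requested):
    """An RBAC list matches either the exact value or the '*' wildcard."""
    return "*" in allowed or requested in allowed


def is_allowed(rules, api_group, resource, verb):
    """Return True if any rule grants `verb` on `resource` in `api_group`.

    RBAC only adds permissions, so a single matching rule is sufficient.
    """
    return any(
        _covers(rule["apiGroups"], api_group)
        and _covers(rule["resources"], resource)
        and _covers(rule["verbs"], verb)
        for rule in rules
    )
```

For the authoritative answer from a live cluster, `kubectl auth can-i list pods --as=system:serviceaccount:kube-system:prometheus` asks the API server directly.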

Create the RBAC manifest file: vim rbac.yaml

## The YAML file contents are as follows

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: kube-system

Create the ClusterRole, ServiceAccount, and ClusterRoleBinding:

$ kubectl create -f rbac.yaml
clusterrole.rbac.authorization.k8s.io/prometheus created
serviceaccount/prometheus created
clusterrolebinding.rbac.authorization.k8s.io/prometheus created

3.4 ConfigMap
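Most of the prometheus.yml stored in this section's ConfigMap consists of relabel_configs. As a rough illustration of the `replace` action (used, for example, by the kubernetes-service-endpoints and kubernetes-pods jobs to rewrite `__address__` from the `prometheus.io/port` annotation), here is a Python sketch. It follows Prometheus' documented behavior: source label values are joined with `;`, the regex must match the whole string, `$1` / `${1}` in the replacement refer to capture groups, and the defaults are regex `(.*)` and replacement `$1`. Python's `re` stands in for Go's RE2 engine, which works for these patterns but is not equivalent in general; the label values in the usage are made up.

```python
import re


def relabel_replace(labels, source_labels, target_label,
                    regex="(.*)", replacement="$1", separator=";"):
    """Sketch of Prometheus' `replace` relabel action on a dict of labels."""
    # 1. Join the values of the source labels (a missing label counts as "").
    value = separator.join(labels.get(name, "") for name in source_labels)
    # 2. Prometheus anchors the regex at both ends, hence fullmatch.
    match = re.fullmatch(regex, value)
    if match is None:
        return dict(labels)  # no match: the target label is left untouched
    # 3. Translate $1 / ${1} references into Python's \g<1> syntax and expand.
    template = re.sub(r"\$\{?(\d+)\}?", r"\\g<\1>", replacement)
    result = dict(labels)
    result[target_label] = match.expand(template)
    return result


PORT_REGEX = r"([^:]+)(?::\d+)?;(\d+)"  # same regex as in the ConfigMap
```

For instance, a Pod discovered at `10.244.1.7:8080` with the annotation `prometheus.io/port: "9100"` ends up scraped at `10.244.1.7:9100`, while a Pod without the annotation keeps its original address because `(\d+)` cannot match an empty port.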

Create the ConfigMap manifest: vim configmap.yaml

## The YAML file contents are as follows

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: kube-system
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
    scrape_configs:
    - job_name: 'kubernetes-apiservers'
      kubernetes_sd_configs:
      - role: endpoints
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
        action: keep
        regex: default;kubernetes;https
    - job_name: 'kubernetes-nodes'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics
    - job_name: 'kubernetes-cadvisor'
      kubernetes_sd_configs:
      - role: node
      scheme: https
      tls_config:
        ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
      bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
      relabel_configs:
      - action: labelmap
        regex: __meta_kubernetes_node_label_(.+)
      - target_label: __address__
        replacement: kubernetes.default.svc:443
      - source_labels: [__meta_kubernetes_node_name]
        regex: (.+)
        target_label: __metrics_path__
        replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
    - job_name: 'kubernetes-service-endpoints'
      kubernetes_sd_configs:
      - role: endpoints
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
        action: replace
        target_label: __scheme__
        regex: (https?)
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
        action: replace
        target_label: __address__
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        action: replace
        target_label: kubernetes_name
    - job_name: 'kubernetes-services'
      kubernetes_sd_configs:
      - role: service
      metrics_path: /probe
      params:
        module: [http_2xx]
      relabel_configs:
      - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__address__]
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_service_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_service_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-ingresses'
      kubernetes_sd_configs:
      - role: ingress
      relabel_configs:
      - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
        regex: (.+);(.+);(.+)
        replacement: ${1}://${2}${3}
        target_label: __param_target
      - target_label: __address__
        replacement: blackbox-exporter.example.com:9115
      - source_labels: [__param_target]
        target_label: instance
      - action: labelmap
        regex: __meta_kubernetes_ingress_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_ingress_name]
        target_label: kubernetes_name
    - job_name: 'kubernetes-pods'
      kubernetes_sd_configs:
      - role: pod
      relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
        action: keep
        regex: true
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
        action: replace
        target_label: __metrics_path__
        regex: (.+)
      - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
        action: replace
        regex: ([^:]+)(?::\d+)?;(\d+)
        replacement: $1:$2
        target_label: __address__
      - action: labelmap
        regex: __meta_kubernetes_pod_label_(.+)
      - source_labels: [__meta_kubernetes_namespace]
        action: replace
        target_label: kubernetes_namespace
      - source_labels: [__meta_kubernetes_pod_name]
        action: replace
        target_label: kubernetes_pod_name

Create the ConfigMap:

$ kubectl create -f configmap.yaml
configmap/prometheus-config created

$ kubectl get cm prometheus-config -n kube-system
NAME                DATA   AGE
prometheus-config   1      33s

3.5 Deployment
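The Deployment in this section keeps data for `--storage.tsdb.retention=24h` (later Prometheus 2.x releases deprecate this flag in favor of `--storage.tsdb.retention.time`) on an `emptyDir` volume, which survives container restarts but is deleted when the Pod is removed from its node. To size that volume, the Prometheus storage documentation gives a rule of thumb: needed disk ≈ retention time in seconds × ingested samples per second × bytes per sample, with roughly 1 to 2 bytes per sample. A small Python sketch of that arithmetic follows; the active series count is an input you have to measure yourself, and defaulting to 2 bytes per sample is an assumption taken from the pessimistic end of that range.

```python
import re

# Seconds per unit for simple Prometheus-style durations ("15s", "24h", "15d", ...).
_UNIT_SECONDS = {"s": 1, "m": 60, "h": 3600, "d": 86400, "w": 604800, "y": 31536000}


def duration_seconds(text):
    """Parse a single-unit duration such as '24h'; compound forms like '1h30m' are not handled."""
    match = re.fullmatch(r"(\d+)([smhdwy])", text)
    if match is None:
        raise ValueError(f"unsupported duration: {text!r}")
    return int(match.group(1)) * _UNIT_SECONDS[match.group(2)]


def samples_per_second(active_series, scrape_interval="15s"):
    """Each active series yields one sample per scrape."""
    return active_series / duration_seconds(scrape_interval)


def estimate_disk_bytes(retention, active_series, scrape_interval="15s", bytes_per_sample=2.0):
    """Rule of thumb: retention_seconds * samples_per_second * bytes_per_sample."""
    return (duration_seconds(retention)
            * samples_per_second(active_series, scrape_interval)
            * bytes_per_sample)
```

With a hypothetical 30,000 active series scraped every 15s (the global scrape_interval in the ConfigMap), `estimate_disk_bytes("24h", 30000)` gives 345,600,000 bytes, about 330 MiB for one day of data.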

Create the Prometheus Pod manifest file: vim prometheus-deploy.yaml

## The YAML file contents are as follows

---
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus
  namespace: kube-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus
  template:
    metadata:
      labels:
        app: prometheus
    spec:
      containers:
      - image: prom/prometheus:v2.0.0
        name: prometheus
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus"
        - "--storage.tsdb.retention=24h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - mountPath: "/prometheus"
          name: data
        - mountPath: "/etc/prometheus"
          name: config-volume
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
          limits:
            cpu: 500m
            memory: 2500Mi
      serviceAccountName: prometheus
      volumes:
      - name: data
        emptyDir: {}
      - name: config-volume
        configMap:
          name: prometheus-config

Create the Prometheus Pod:

$ kubectl create -f prometheus-deploy.yaml
deployment.apps/prometheus created

$ kubectl get pod -A | grep prometheus
kube-system   prometheus-ddf89874b-d8mbd   1/1   Running   0   76s

3.6 Service
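A NodePort Service such as the one in this section (nodePort: 30003) opens the same port on every node, so Prometheus is reachable on that port at any node's IP. By default kube-apiserver only allocates node ports from 30000-32767 (its `--service-node-port-range` flag) and rejects a manifest that asks for a port outside that range. The small Python helper below mirrors that check and builds the URLs to open; the default range is an assumption that no longer holds if your cluster overrides the flag.

```python
# Default value of kube-apiserver's --service-node-port-range (30000-32767, inclusive).
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)


def node_port_urls(node_ips, node_port, path="/", port_range=DEFAULT_NODE_PORT_RANGE):
    """Return one URL per node for a NodePort Service; every node listens on the same port."""
    if node_port not in port_range:
        raise ValueError(
            f"nodePort {node_port} is outside {port_range.start}-{port_range.stop - 1}"
        )
    return [f"http://{ip}:{node_port}{path}" for ip in node_ips]
```

For this article's node, `node_port_urls(["192.168.1.33"], 30003)` yields `["http://192.168.1.33:30003/"]`; the same helper applies to node-exporter's port 31672 with `path="/metrics"`.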

Expose Prometheus; prepare the manifest: vim prometheus-service.yaml

## The YAML file contents are as follows

---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: prometheus
  name: prometheus
  namespace: kube-system
spec:
  type: NodePort
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30003
  selector:
    app: prometheus

Create the Service:

$ kubectl create -f prometheus-service.yaml
service/prometheus created

$ kubectl get svc -n kube-system | grep prometheus
prometheus   NodePort   10.96.216.242   <none>   9090:30003/TCP   32s

3.7 Verifying Prometheus

$ kubectl get pod,svc -n kube-system | grep prometheus

pod/prometheus-ddf89874b-d8mbd 1/1 Running 0 4m20s

service/prometheus NodePort 10.96.216.242 <none> 9090:30003/TCP 96s 18s$ kubectl get DaemonSet -n kube-system | grep node-exporter

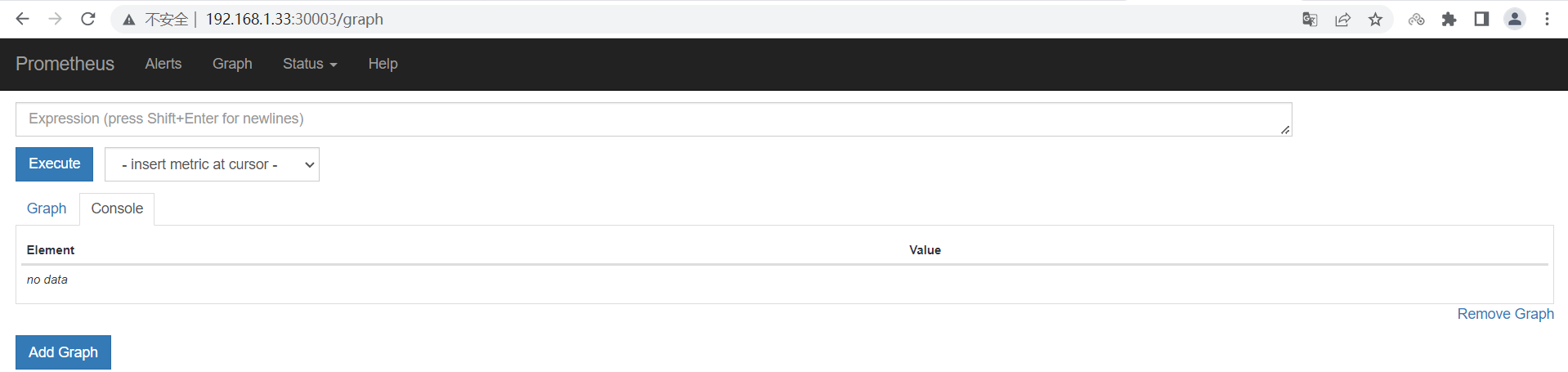

node-exporter 2 2 2 2 2 <none> 可以看到,成功启动了prometheus的pod和service,prometheus对外暴露的端口是30003,且安装在192.168.1.33这台机器上。在浏览器通过[ip:port]访问http://192.168.1.33:30003,如下图:
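Besides the web UI, the same check can be scripted against Prometheus' HTTP API: an instant query for `up` returns one series per scrape target, with value 1 if the last scrape succeeded and 0 if it failed. A standard-library Python sketch, assuming Prometheus is reachable at the NodePort URL above; the response-handling part follows the documented `/api/v1/query` JSON shape.

```python
import json
import urllib.parse
import urllib.request


def query_url(base_url, promql):
    """Build an instant-query URL for Prometheus' /api/v1/query endpoint."""
    return f"{base_url.rstrip('/')}/api/v1/query?" + urllib.parse.urlencode({"query": promql})


def fetch_json(url, timeout=5):
    """GET the URL and decode the JSON body (needs network access to Prometheus)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def down_targets(response):
    """From an `up` query response, return (job, instance) pairs whose value is 0."""
    if response.get("status") != "success":
        raise RuntimeError(response.get("error", "query failed"))
    down = []
    for series in response["data"]["result"]:
        _timestamp, value = series["value"]  # instant vector samples are [time, "value"]
        if float(value) == 0:
            labels = series["metric"]
            down.append((labels.get("job"), labels.get("instance")))
    return down
```

From a machine that can reach the node, `down_targets(fetch_json(query_url("http://192.168.1.33:30003", "up")))` should return an empty list when every target is healthy.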

This shows that Prometheus is up and running. Next, we deploy Grafana.

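4. Deploying Grafana

Official Grafana documentation: Documentation | Grafana Labs.

Main features of Grafana:

- Open-source data analytics and visualization tool

- Supports many data sources

In the k8s monitoring platform, Grafana's role is to read data from Prometheus and visualize it as dashboards.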
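Concretely, for a graph panel Grafana's Prometheus data source issues range queries (`/api/v1/query_range`) with a PromQL expression, a start and end time, and a step; Prometheus evaluates the expression once per step. Prometheus refuses range queries where (end - start) / step exceeds 11,000, which is why long time ranges need a coarser step. The Python sketch below builds such a request and counts the points per series; the base URL and the query `up` are illustrative only.

```python
import urllib.parse

# Prometheus rejects range queries where (end - start) / step exceeds this value.
MAX_RESOLUTION = 11000


def range_points(start, end, step):
    """Number of evaluation timestamps start, start+step, ... <= end (seconds)."""
    return int((end - start) // step) + 1


def query_range_url(base_url, promql, start, end, step):
    """Build a /api/v1/query_range URL; start/end are Unix timestamps, step is seconds."""
    if (end - start) / step > MAX_RESOLUTION:
        raise ValueError("too many points per series; use a larger step")
    params = {"query": promql, "start": start, "end": end, "step": step}
    return f"{base_url.rstrip('/')}/api/v1/query_range?" + urllib.parse.urlencode(params)
```

A one-hour window at a 15-second step, for example, evaluates 241 points per series.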

4.1 Deployment

Create the Grafana Pod manifest: vim grafana-deploy.yaml

## The YAML file contents are as follows

apiVersion: apps/v1
kind: Deployment
metadata:
  name: grafana-core
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  replicas: 1
  selector:
    matchLabels:
      app: grafana
      component: core
  template:
    metadata:
      labels:
        app: grafana
        component: core
    spec:
      containers:
      - image: grafana/grafana:4.2.0
        name: grafana-core
        imagePullPolicy: IfNotPresent
        # env:
        resources:
          # keep request = limit to keep this container in guaranteed class
          limits:
            cpu: 100m
            memory: 100Mi
          requests:
            cpu: 100m
            memory: 100Mi
        env:
          # The following env variables set up basic auth with the default admin user and admin password.
          - name: GF_AUTH_BASIC_ENABLED
            value: "true"
          - name: GF_AUTH_ANONYMOUS_ENABLED
            value: "false"
          # - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          #   value: Admin
          # does not really work, because of template variables in exported dashboards:
          # - name: GF_DASHBOARDS_JSON_ENABLED
          #   value: "true"
        readinessProbe:
          httpGet:
            path: /login
            port: 3000
          # initialDelaySeconds: 30
          # timeoutSeconds: 1
        volumeMounts:
        - name: grafana-persistent-storage
          mountPath: /var
      volumes:
      - name: grafana-persistent-storage
        emptyDir: {}

Create the Grafana Pod:

$ kubectl create -f grafana-deploy.yaml
deployment.apps/grafana-core created

$ kubectl get pod -n kube-system | grep grafana
grafana-core-7b7ccc7bcf-8lmhq   1/1   Running   0   2m29s

4.2 Service

Create the manifest file: vim grafana-service.yaml

## The YAML file contents are as follows

apiVersion: v1
kind: Service
metadata:
  name: grafana
  namespace: kube-system
  labels:
    app: grafana
    component: core
spec:
  type: NodePort
  ports:
  - port: 3000
  selector:
    app: grafana
    component: core

Create the Grafana Service to expose it:

$ kubectl create -f grafana-service.yaml
service/grafana created

4.3 Ingress
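The Ingress in this section routes by host name: the ingress controller forwards a request to the grafana Service only when its `Host` header is `k8s.grafana`. An Ingress also only takes effect if an ingress controller (for example ingress-nginx) is running in the cluster, which this article does not cover. Since k8s.grafana is not a public DNS name, you can either map it to the controller's IP in your hosts file or set the header yourself. A standard-library Python sketch of the second option; `controller_addr` is a hypothetical placeholder for wherever your ingress controller listens.

```python
import urllib.request


def ingress_request(controller_addr, host="k8s.grafana", path="/login"):
    """Build a GET request to the ingress controller that the Ingress rule for `host` matches.

    controller_addr is a hypothetical placeholder: the IP (plus ":port" if not 80)
    of your ingress controller.
    """
    req = urllib.request.Request(f"http://{controller_addr}{path}")
    # Routing is decided by the Host header, not by the address we connect to.
    req.add_header("Host", host)
    return req
```

Sending it with `urllib.request.urlopen(ingress_request("<controller-ip>"))` should return Grafana's login page if the controller and the Ingress are working.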

Create the Ingress manifest: vim grafana-ingress.yaml

## The YAML file contents are as follows

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: grafana
  namespace: kube-system
spec:
  rules:
  - host: k8s.grafana
    http:
      paths:
      - path: /
        backend:
          serviceName: grafana
          servicePort: 3000

Create the Ingress:

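$ kubectl create -f grafana-ingress.yaml
Warning: extensions/v1beta1 Ingress is deprecated in v1.14+, unavailable in v1.22+; use networking.k8s.io/v1 Ingress
ingress.extensions/grafana created

As the warning says, the extensions/v1beta1 Ingress API is no longer served from Kubernetes v1.22 on. On such clusters the manifest must use apiVersion: networking.k8s.io/v1, where each path also needs a pathType (for example Prefix) and the backend is written as service.name: grafana with service.port.number: 3000.

4.4 Verifying Grafana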

$ kubectl get pod,svc -n kube-system | grep grafana

pod/grafana-core-85587c9c49-zqhhh 1/1 Running 0 76s

service/grafana NodePort 10.105.169.65 <none> 3000:30155/TCP 55s$ kubectl get ing -n kube-system | grep grafana

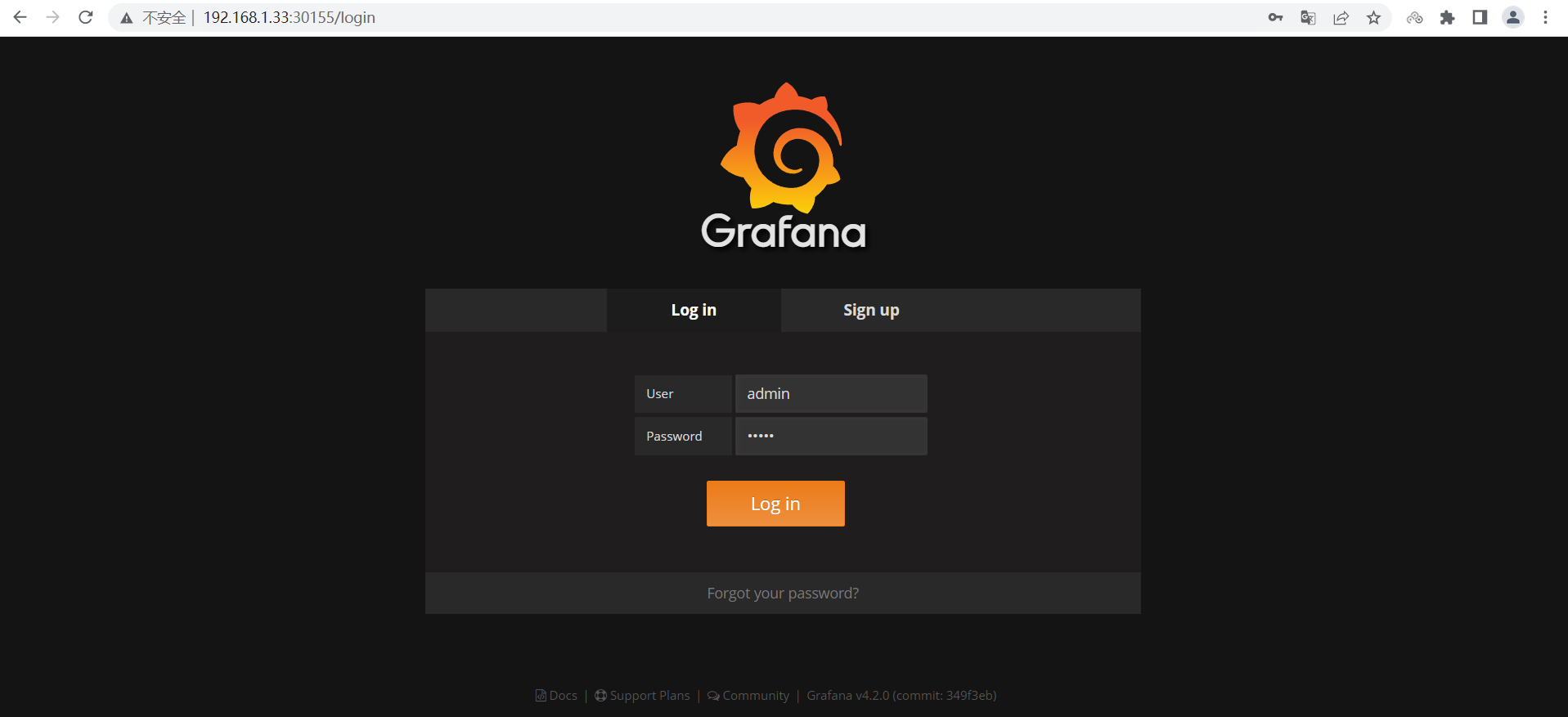

grafana <none> k8s.grafana 80 3h28m可以看到,grafana对外暴露的端口是30155,且安装在192.168.1.33这台机器上,所以我们通过浏览器访问:http://192.168.1.33:30155/,默认用户名/密码为:admin/admin:

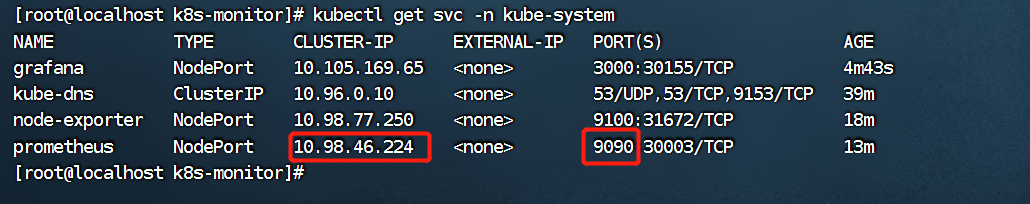

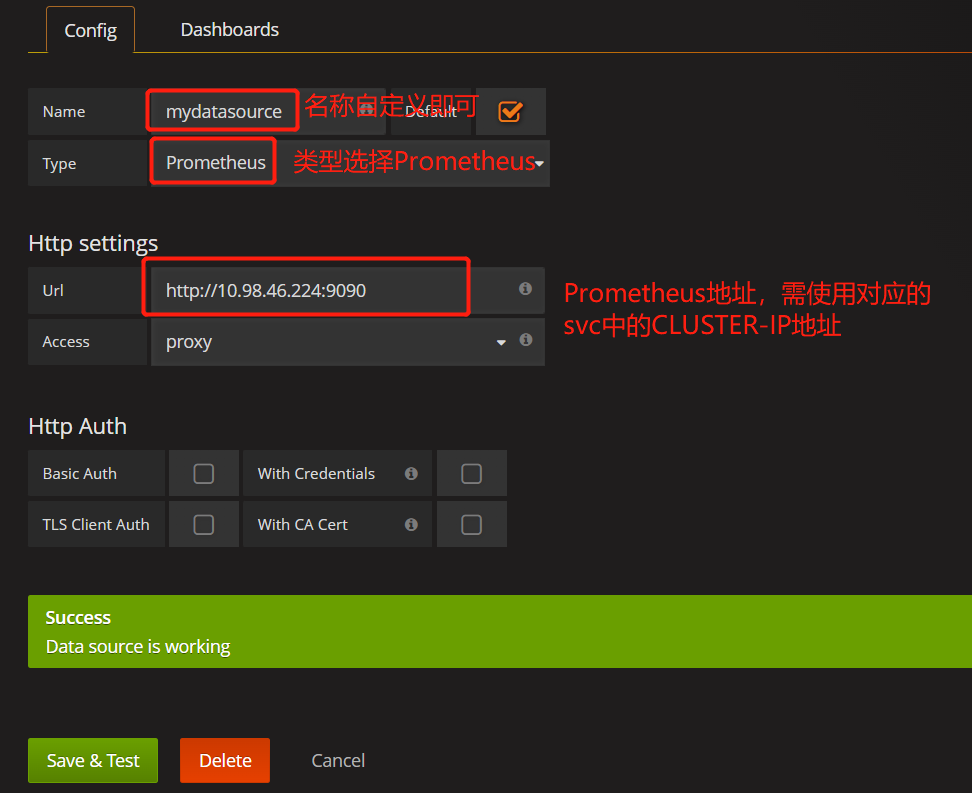

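Next, add Prometheus as a data source. When binding Prometheus, connect using the CLUSTER-IP of the prometheus Service together with the Service port that forwards to the container, 9090; in the screenshot below this is 10.98.46.224:9090. The ClusterIP is assigned per cluster (the output in 3.7 shows 10.96.216.242, for example), so read the value from kubectl get svc -n kube-system in your own cluster: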

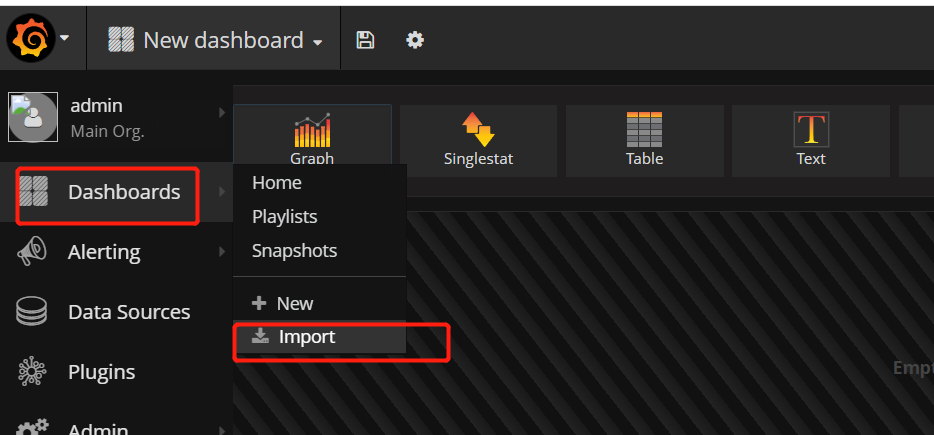
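The same data source can also be created through Grafana's HTTP API (`POST /api/datasources`), which accepts HTTP basic auth; that works here because the Deployment sets GF_AUTH_BASIC_ENABLED to "true". The Python sketch below only builds the request. The name mydatasource matches this example, the URL is the ClusterIP:9090 used above, and admin/admin are the default credentials you would normally change; `access: "proxy"` means Grafana's server, which runs inside the cluster, makes the requests to Prometheus.

```python
import base64
import json
import urllib.request


def datasource_payload(name, prometheus_url):
    """JSON body for a Prometheus data source; 'proxy' means Grafana's server queries Prometheus."""
    return {"name": name, "type": "prometheus", "url": prometheus_url,
            "access": "proxy", "isDefault": True}


def build_datasource_request(grafana_url, payload, user="admin", password="admin"):
    """Build (but do not send) POST /api/datasources with HTTP basic auth."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        f"{grafana_url.rstrip('/')}/api/datasources",
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json", "Authorization": f"Basic {token}"},
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` sends it; repeating the call with an existing name should fail, since data source names must be unique.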

Once the data source is added, import a dashboard template:

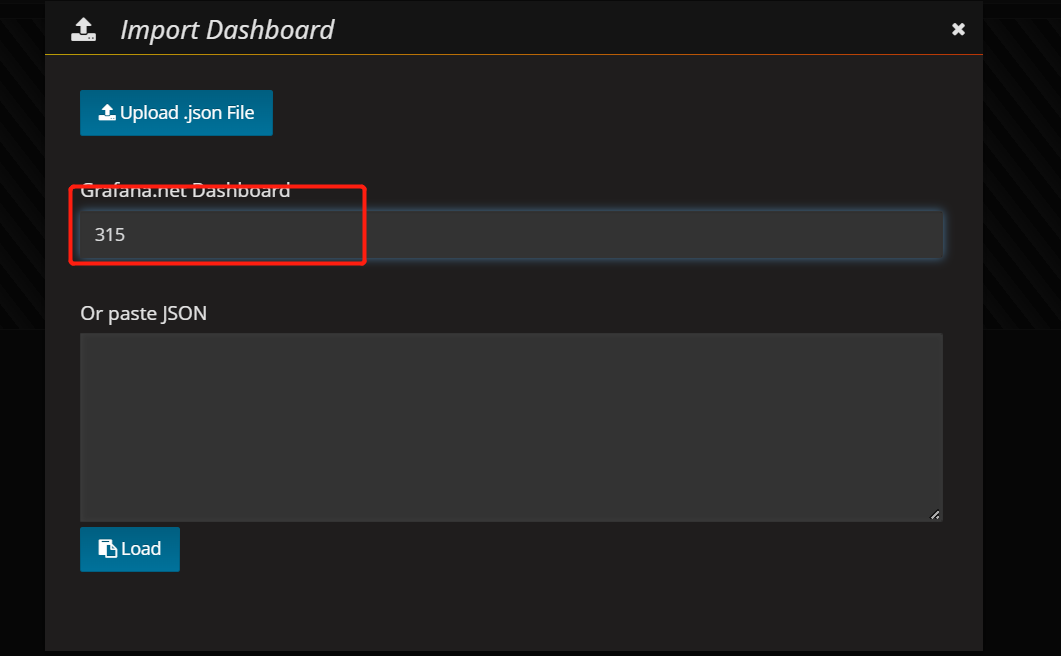

Enter the ID of a Prometheus dashboard template published online; here we choose template 315:

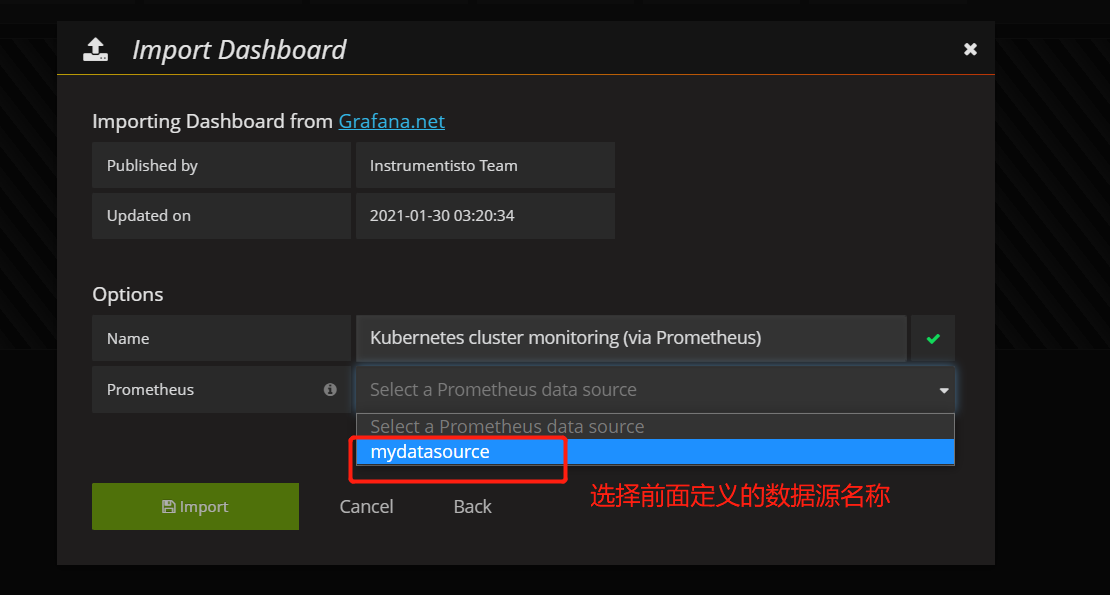

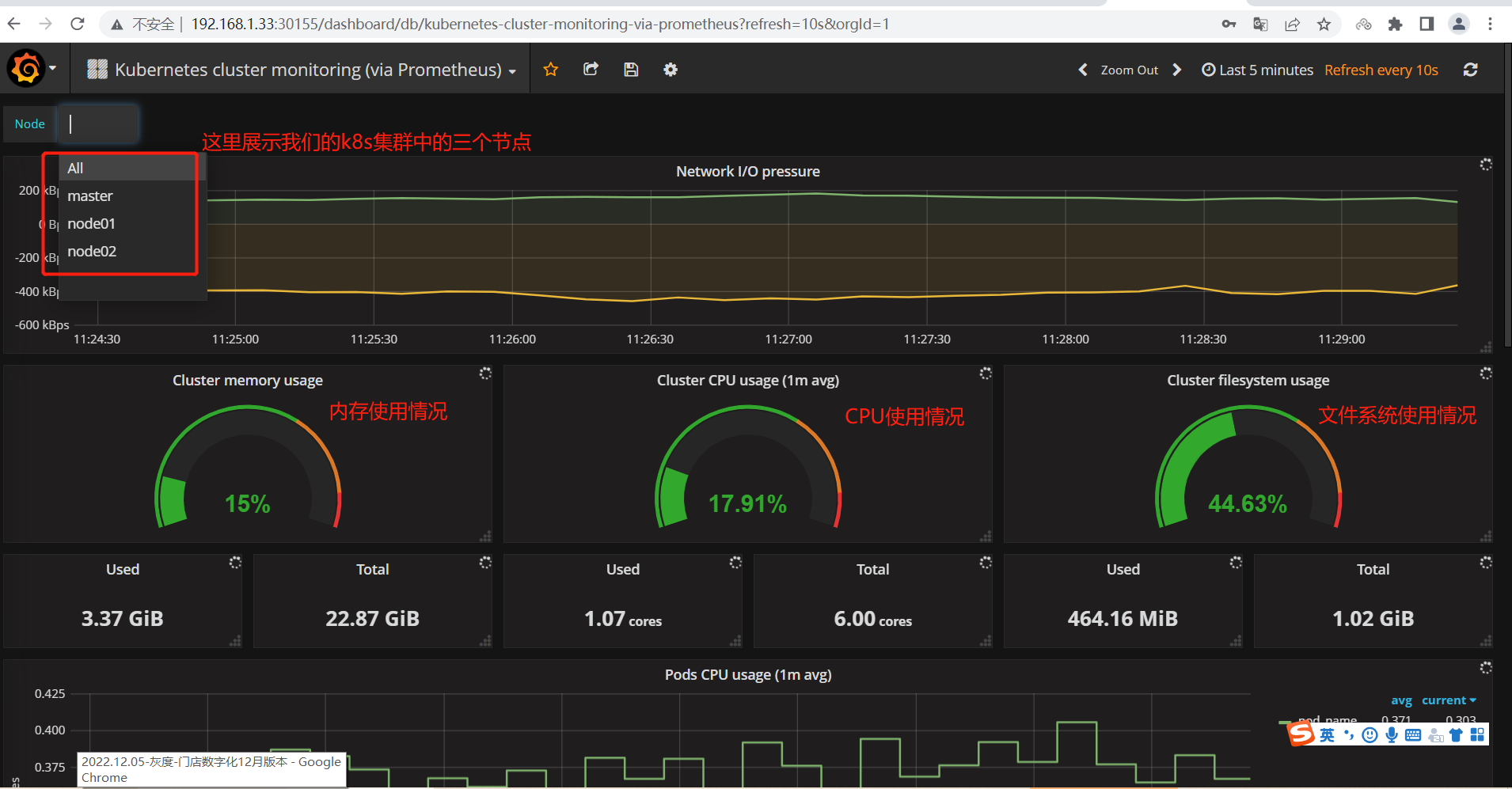

Select the data source name defined earlier (mydatasource in this example) and click Import to visualize the data:

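At this point, Prometheus combined with Grafana gives us a simple k8s cluster monitoring platform. This is only a basic demonstration; consult the official documentation for more advanced features when you need them.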
