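Contents

- I. Introduction

- II. Preliminaries

- 1. Set up the GPU (skip this step if you are using a CPU)

- 2. Import the data

- 3. Inspect the data

- 4. Convert labels to numbers

- II. Building a tf.data.Dataset

- 1. Preprocessing functions

- 2. Load the data

- 3. Configure the dataset

- III. Building the network

- IV. Compiling

- V. Training

- VI. Model evaluation

- VII. Saving and loading the model

- VIII. Prediction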

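I. Introduction

My environment:

- Language: Python 3.6.5

- Editor: Jupyter Notebook

- Deep learning framework: TensorFlow 2.4.1

Previous posts:

- Recognizing MNIST handwritten digits with a convolutional neural network (CNN)

- Classifying multiple kinds of images with a convolutional neural network (CNN)

- Classifying clothing images with a convolutional neural network (CNN)

- Flower recognition with a convolutional neural network (CNN)

- Weather recognition with a convolutional neural network (CNN)

- Recognizing the Straw Hat Pirates from One Piece with a convolutional neural network (VGG-16)

- Bird recognition with a convolutional neural network (ResNet-50)

- Bird recognition with a convolutional neural network (AlexNet)

From the column: Machine Learning and Deep Learning Algorithm Recommendations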

II. Preliminaries

1. Set up the GPU (skip this step if you are using a CPU)

import tensorflow as tf

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

2. Import the data

import matplotlib.pyplot as plt

# Support Chinese text in plots
plt.rcParams['font.sans-serif'] = ['SimHei']  # display Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False    # display the minus sign correctly

import os, PIL, random, pathlib

# Set random seeds so that results are as reproducible as possible
import numpy as np
np.random.seed(1)

import tensorflow as tf
tf.random.set_seed(1)

data_dir = "code"
data_dir = pathlib.Path(data_dir)

all_image_paths = list(data_dir.glob('*'))
all_image_paths = [str(path) for path in all_image_paths]

# Shuffle the data
random.shuffle(all_image_paths)

# Get the labels: each file name (without its extension) is the captcha text.
# Using pathlib here avoids depending on the path separator or directory depth.
all_label_names = [pathlib.Path(path).name.split(".")[0] for path in all_image_paths]

image_count = len(all_image_paths)
print("Total number of images:", image_count)

3. 查看数据

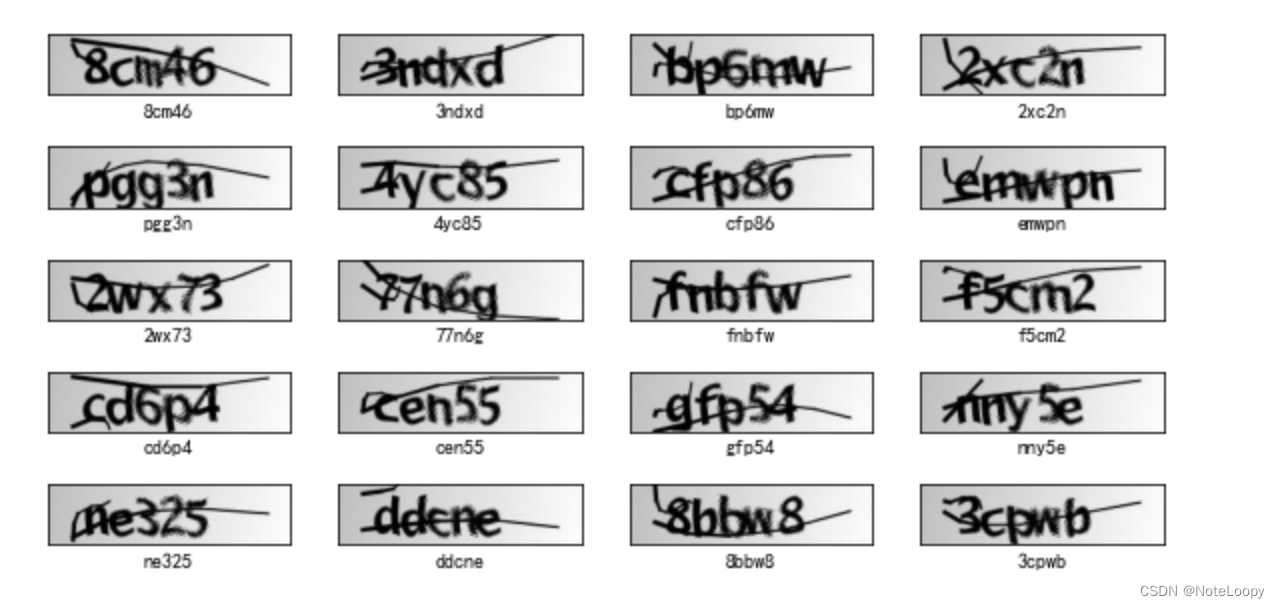

plt.figure(figsize=(10,5))for i in range(20):plt.subplot(5,4,i+1)plt.xticks([])plt.yticks([])plt.grid(False)# 显示图片images = plt.imread(all_image_paths[i])plt.imshow(images)# 显示标签plt.xlabel(all_label_names[i])plt.show()

4. Convert labels to numbers

number = ['0', '1', '2', '3', '4', '5', '6', '7', '8', '9']
alphabet = ['a','b','c','d','e','f','g','h','i','j','k','l','m','n','o','p','q','r','s','t','u','v','w','x','y','z']
char_set = number + alphabet
char_set_len = len(char_set)
label_name_len = len(all_label_names[0])

# Convert a label string into a one-hot matrix (one row per character)
def text2vec(text):
    vector = np.zeros([label_name_len, char_set_len])
    for i, c in enumerate(text):
        idx = char_set.index(c)
        vector[i][idx] = 1.0
    return vector

all_labels = [text2vec(i) for i in all_label_names]
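To make the encoding concrete, the sketch below applies the same one-hot scheme to a single label and then decodes it again with argmax. The label "2b8n7" is made up for illustration, and the helper name vec_to_text is a hypothetical inverse that is not part of the code above.

```python
import numpy as np

# Same character set as above: digits followed by lowercase letters.
char_set = list("0123456789abcdefghijklmnopqrstuvwxyz")

def text2vec(text):
    """One-hot encode a label: one row per character, one column per class."""
    vector = np.zeros([len(text), len(char_set)])
    for i, c in enumerate(text):
        vector[i][char_set.index(c)] = 1.0
    return vector

def vec_to_text(vector):
    """Hypothetical inverse: take the index of the largest value in each row."""
    return "".join(char_set[idx] for idx in np.argmax(vector, axis=1))

example = "2b8n7"            # made-up label, for illustration only
encoded = text2vec(example)

print(encoded.shape)         # (5, 36)
print(vec_to_text(encoded))  # 2b8n7
```

Each row contains exactly one 1.0, so a five-character captcha becomes a 5 x 36 matrix, which is the label shape the dataset reports later.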

II. Building a tf.data.Dataset

1. Preprocessing functions

def preprocess_image(image):
    image = tf.image.decode_jpeg(image, channels=1)
    image = tf.image.resize(image, [50, 200])
    return image / 255.0

def load_and_preprocess_image(path):
    image = tf.io.read_file(path)
    return preprocess_image(image)

2. Load the data

The simplest way to build a tf.data.Dataset is to use the from_tensor_slices method.

AUTOTUNE = tf.data.experimental.AUTOTUNE

path_ds = tf.data.Dataset.from_tensor_slices(all_image_paths)
image_ds = path_ds.map(load_and_preprocess_image, num_parallel_calls=AUTOTUNE)
label_ds = tf.data.Dataset.from_tensor_slices(all_labels)

image_label_ds = tf.data.Dataset.zip((image_ds, label_ds))
image_label_ds

<ZipDataset shapes: ((50, 200, 1), (5, 36)), types: (tf.float32, tf.float64)>

train_ds = image_label_ds.take(1000)  # the first 1000 samples (the dataset is not batched yet)
val_ds = image_label_ds.skip(1000)    # skip the first 1000 samples and keep the rest

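3. Configure the dataset

First, a quick review of prefetch(). Without prefetching, the accelerator sits idle while the CPU prepares data, and the CPU sits idle while the accelerator trains the model, so the time for each training step is the sum of the CPU preprocessing time and the accelerator training time. prefetch() overlaps the preprocessing and the model execution of a training step: while the accelerator runs training step N, the CPU prepares the data for step N+1. This reduces the step time to the maximum (rather than the sum) of the training time and the time needed to extract and transform the data. Without prefetch(), the CPU and the GPU/TPU are idle much of the time.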
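A back-of-the-envelope model shows why the overlap helps. The sketch below assumes fixed, made-up per-step costs (prep_ms and train_ms are hypothetical numbers, not measurements) and an idealized pipeline; real step times vary.

```python
def sequential_time(steps, prep_ms, train_ms):
    """No prefetch: every step prepares its batch first, then trains on it."""
    return steps * (prep_ms + train_ms)

def pipelined_time(steps, prep_ms, train_ms):
    """Idealized prefetch: batch N+1 is prepared while batch N trains.

    Only the first preparation and the last training step are not
    overlapped; every step in between costs max(prep_ms, train_ms).
    """
    return prep_ms + (steps - 1) * max(prep_ms, train_ms) + train_ms

steps, prep_ms, train_ms = 63, 4, 10   # hypothetical numbers

print(sequential_time(steps, prep_ms, train_ms))  # 882
print(pipelined_time(steps, prep_ms, train_ms))   # 634
```

In this toy model the saving per step is the smaller of the two costs, so prefetching helps most when preprocessing and training take comparable time.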

BATCH_SIZE = 16

train_ds = train_ds.batch(BATCH_SIZE)
train_ds = train_ds.prefetch(buffer_size=AUTOTUNE)

val_ds = val_ds.batch(BATCH_SIZE)
val_ds = val_ds.prefetch(buffer_size=AUTOTUNE)

val_ds
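Batching determines how many steps make up one epoch. Assuming the dataset holds at least 1000 images, the training split has 1000 samples, and with a batch size of 16 the last batch is only partly filled, so the step count rounds up. This agrees with the 63/63 shown in the training log further down. A minimal check in plain Python:

```python
import math

train_samples = 1000   # size of the training split before batching, from take(1000)
batch_size = 16        # same value as BATCH_SIZE

steps_per_epoch = math.ceil(train_samples / batch_size)
last_batch_size = train_samples - (steps_per_epoch - 1) * batch_size

print(steps_per_epoch)   # 63
print(last_batch_size)   # 8
```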

III. Building the network

from tensorflow.keras import datasets, layers, models

model = models.Sequential([
    layers.Conv2D(32, (3, 3), activation='relu', input_shape=(50, 200, 1)),  # convolution layer 1, 3x3 kernel
    layers.MaxPooling2D((2, 2)),                     # pooling layer 1, 2x2 downsampling
    layers.Conv2D(64, (3, 3), activation='relu'),    # convolution layer 2, 3x3 kernel
    layers.MaxPooling2D((2, 2)),                     # pooling layer 2, 2x2 downsampling

    layers.Flatten(),                                # Flatten layer, connects the convolutional and dense layers
    layers.Dense(1000, activation='relu'),           # fully connected layer, further feature extraction

    layers.Dense(label_name_len * char_set_len),
    layers.Reshape([label_name_len, char_set_len]),
    layers.Softmax()                                 # output layer: class probabilities for each character position
])

# Print the network structure
model.summary()

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv2d (Conv2D) (None, 48, 198, 32) 320

_________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 24, 99, 32) 0

_________________________________________________________________

conv2d_1 (Conv2D) (None, 22, 97, 64) 18496

_________________________________________________________________

max_pooling2d_1 (MaxPooling2 (None, 11, 48, 64) 0

_________________________________________________________________

flatten (Flatten) (None, 33792) 0

_________________________________________________________________

dense (Dense) (None, 1000) 33793000

_________________________________________________________________

dense_1 (Dense) (None, 180) 180180

_________________________________________________________________

reshape (Reshape) (None, 5, 36) 0

_________________________________________________________________

softmax (Softmax) (None, 5, 36) 0

=================================================================

Total params: 33,991,996

Trainable params: 33,991,996

Non-trainable params: 0

_________________________________________________________________
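The shapes and parameter counts in the summary can be re-derived by hand, which is a useful sanity check when changing the input size or the layers. The sketch below assumes what the Keras defaults give here: 'valid' padding and stride 1 for the convolutions, and non-overlapping 2x2 pooling. The helper names conv_out and pool_out are made up for this illustration.

```python
def conv_out(size, kernel=3):
    """Output length of a 'valid' convolution with stride 1."""
    return size - kernel + 1

def pool_out(size, pool=2):
    """Output length of non-overlapping max pooling (floor division)."""
    return size // pool

h, w = 50, 200
h, w = pool_out(conv_out(h)), pool_out(conv_out(w))   # after conv 1 + pool 1: 24 x 99
h, w = pool_out(conv_out(h)), pool_out(conv_out(w))   # after conv 2 + pool 2: 11 x 48
flat = h * w * 64                                     # 64 channels after conv 2

# Parameters = weights + biases for each trainable layer.
params = {
    "conv2d":   3 * 3 * 1 * 32 + 32,
    "conv2d_1": 3 * 3 * 32 * 64 + 64,
    "dense":    flat * 1000 + 1000,
    "dense_1":  1000 * 180 + 180,    # 180 = 5 characters x 36 classes
}

print(flat)                   # 33792
print(params["dense"])        # 33793000
print(sum(params.values()))   # 33991996
```

Almost all of the parameters sit in the first dense layer, because it connects 33,792 flattened features to 1,000 units.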

IV. Compiling

model.compile(optimizer="adam",
              loss='categorical_crossentropy',
              metrics=['accuracy'])

V. Training

epochs = 20

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/20

63/63 [==============================] - 4s 21ms/step - loss: 3.2998 - accuracy: 0.0934 - val_loss: 2.2876 - val_accuracy: 0.2943

Epoch 2/20

63/63 [==============================] - 1s 9ms/step - loss: 1.7016 - accuracy: 0.5195 - val_loss: 1.2014 - val_accuracy: 0.6314

Epoch 3/20

63/63 [==============================] - 1s 10ms/step - loss: 0.5267 - accuracy: 0.8379 - val_loss: 0.9039 - val_accuracy: 0.7286

Epoch 4/20

63/63 [==============================] - 1s 10ms/step - loss: 0.1911 - accuracy: 0.9442 - val_loss: 0.8609 - val_accuracy: 0.7457

Epoch 5/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0916 - accuracy: 0.9714 - val_loss: 0.8937 - val_accuracy: 0.7886

Epoch 6/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0680 - accuracy: 0.9798 - val_loss: 0.5842 - val_accuracy: 0.8429

Epoch 7/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0443 - accuracy: 0.9900 - val_loss: 0.6235 - val_accuracy: 0.8200

Epoch 8/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0203 - accuracy: 0.9947 - val_loss: 0.7697 - val_accuracy: 0.8029

Epoch 9/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0131 - accuracy: 0.9975 - val_loss: 0.6660 - val_accuracy: 0.8314

Epoch 10/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0227 - accuracy: 0.9940 - val_loss: 0.6018 - val_accuracy: 0.8229

Epoch 11/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0093 - accuracy: 0.9985 - val_loss: 0.5714 - val_accuracy: 0.8429

Epoch 12/20

63/63 [==============================] - 1s 10ms/step - loss: 0.0010 - accuracy: 1.0000 - val_loss: 0.5793 - val_accuracy: 0.8571

Epoch 13/20

63/63 [==============================] - 1s 10ms/step - loss: 2.6284e-04 - accuracy: 1.0000 - val_loss: 0.5920 - val_accuracy: 0.8571

Epoch 14/20

63/63 [==============================] - 1s 10ms/step - loss: 1.8502e-04 - accuracy: 1.0000 - val_loss: 0.6031 - val_accuracy: 0.8571

Epoch 15/20

63/63 [==============================] - 1s 10ms/step - loss: 1.4164e-04 - accuracy: 1.0000 - val_loss: 0.6120 - val_accuracy: 0.8571

Epoch 16/20

63/63 [==============================] - 1s 10ms/step - loss: 1.1334e-04 - accuracy: 1.0000 - val_loss: 0.6198 - val_accuracy: 0.8571

Epoch 17/20

63/63 [==============================] - 1s 10ms/step - loss: 9.4027e-05 - accuracy: 1.0000 - val_loss: 0.6269 - val_accuracy: 0.8571

Epoch 18/20

63/63 [==============================] - 1s 10ms/step - loss: 8.0025e-05 - accuracy: 1.0000 - val_loss: 0.6335 - val_accuracy: 0.8514

Epoch 19/20

63/63 [==============================] - 1s 9ms/step - loss: 6.9294e-05 - accuracy: 1.0000 - val_loss: 0.6396 - val_accuracy: 0.8486

Epoch 20/20

63/63 [==============================] - 1s 10ms/step - loss: 6.0775e-05 - accuracy: 1.0000 - val_loss: 0.6448 - val_accuracy: 0.8486

VI. Model evaluation

acc = history.history['accuracy']
val_acc = history.history['val_accuracy']

loss = history.history['loss']
val_loss = history.history['val_loss']

epochs_range = range(epochs)

plt.figure(figsize=(12, 4))
plt.subplot(1, 2, 1)

plt.plot(epochs_range, acc, label='Training Accuracy')
plt.plot(epochs_range, val_acc, label='Validation Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Validation Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, loss, label='Training Loss')
plt.plot(epochs_range, val_loss, label='Validation Loss')
plt.legend(loc='upper right')
plt.title('Training and Validation Loss')
plt.show()
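One caveat when reading these curves: with an output of shape (5, 36), the accuracy metric compares the argmax along the last axis, so it counts correct characters rather than fully correct captchas. The share of captchas with all five characters right will generally be lower. The sketch below shows the difference on made-up predictions; the strings and function names are hypothetical.

```python
def char_accuracy(true_labels, pred_labels):
    """Fraction of individual characters predicted correctly."""
    total = sum(len(t) for t in true_labels)
    correct = sum(
        tc == pc
        for t, p in zip(true_labels, pred_labels)
        for tc, pc in zip(t, p)
    )
    return correct / total

def captcha_accuracy(true_labels, pred_labels):
    """Fraction of captchas in which every character is correct."""
    matches = sum(t == p for t, p in zip(true_labels, pred_labels))
    return matches / len(true_labels)

# Made-up ground truth and predictions, for illustration only.
true_labels = ["2b8n7", "x4f9k", "m3p6d", "7wq2a"]
pred_labels = ["2b8n7", "x4f9h", "m3p6d", "7vq2e"]

print(char_accuracy(true_labels, pred_labels))     # 0.85
print(captcha_accuracy(true_labels, pred_labels))  # 0.5
```

Here 17 of 20 characters are right, but only 2 of 4 captchas are fully correct, so a validation accuracy around 0.85 does not mean 85% of captchas are solved.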

VII. Saving and loading the model

# Save the model
model.save('model/12_model.h5')

# Load the model
new_model = tf.keras.models.load_model('model/12_model.h5')

八、预测

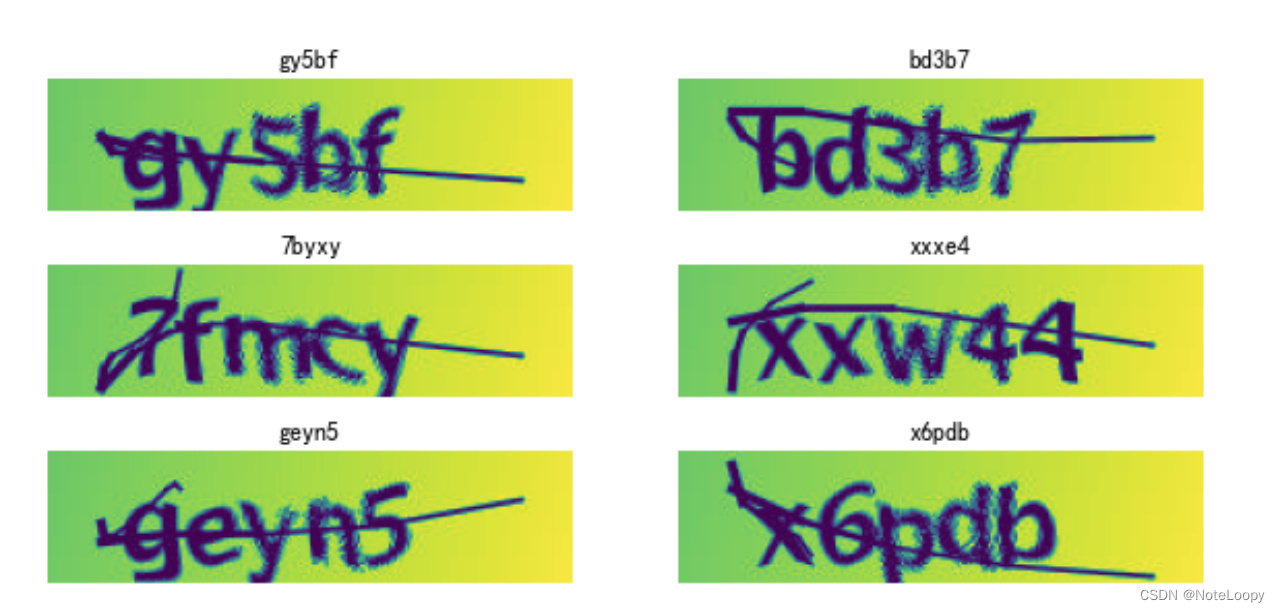

def vec2text(vec):"""还原标签(向量->字符串)"""text = []for i, c in enumerate(vec):text.append(char_set[c])return "".join(text)plt.figure(figsize=(10, 8)) # 图形的宽为10高为8for images, labels in val_ds.take(1):for i in range(6):ax = plt.subplot(5, 2, i + 1) # 显示图片plt.imshow(images[i])# 需要给图片增加一个维度img_array = tf.expand_dims(images[i], 0) # 使用模型预测验证码predictions = model.predict(img_array)plt.title(vec2text(np.argmax(predictions, axis=2)[0]))plt.axis("off")
